Computer Vision Engineer Staffing

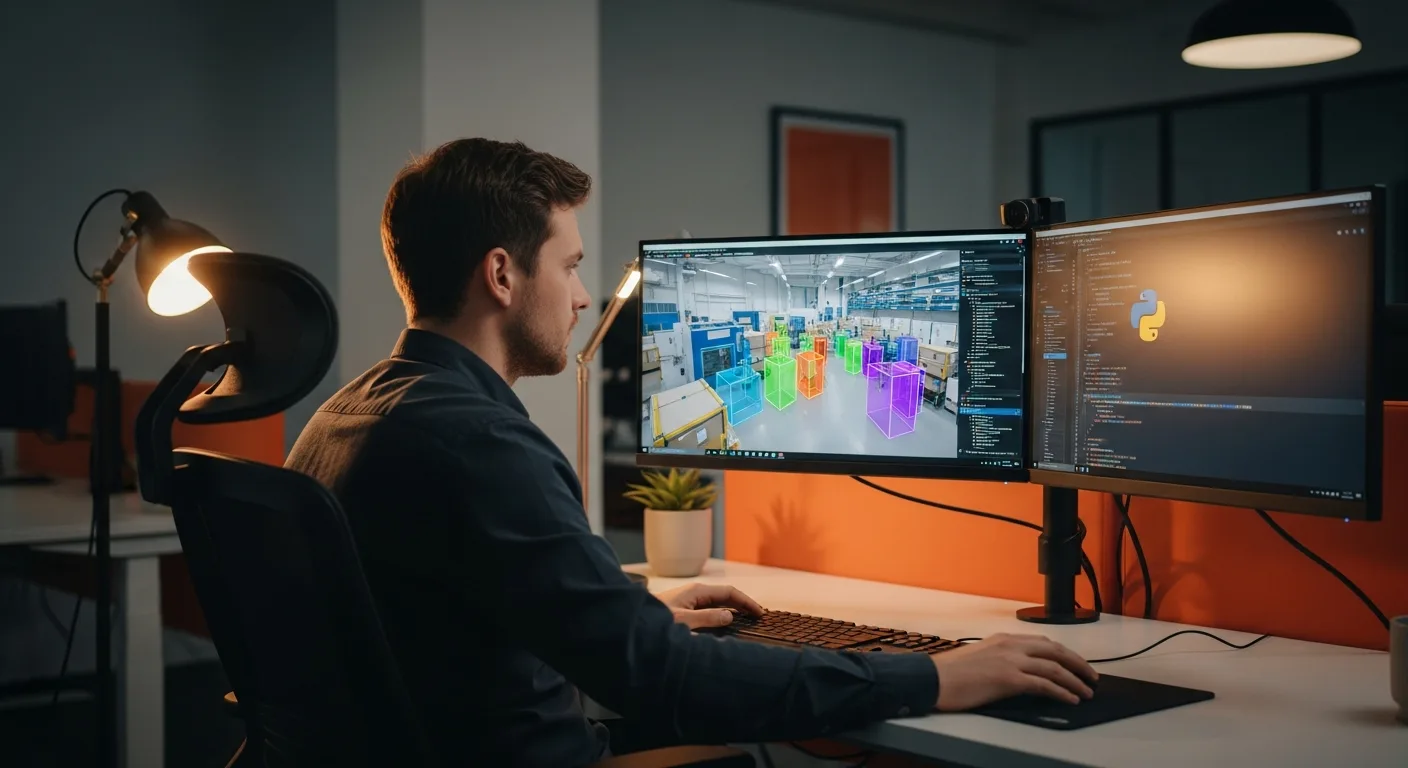

Most “computer vision engineers” on LinkedIn have never shipped a production inference pipeline. We vet the ones who have. From YOLO to TensorRT, KORE1 sources CV engineers who know the full stack, not just the theory.

Last updated: April 30, 2026

KORE1 specializes in computer vision engineer staffing, placing PyTorch, OpenCV, and YOLO engineers in an average of 17 days with a 92% 12-month retention rate across contract, direct hire, and project engagements.

Computer Vision Hiring Is Broken — and Most Recruiters Don’t Know Why

The title “computer vision engineer” covers an enormous range. On one end, there’s the researcher fine-tuning ViT architectures on a GPU cluster. On the other, there’s the engineer deploying a real-time detection pipeline on edge hardware with a 30ms latency budget, integrating camera calibration, ONNX export, TensorRT optimization, and production monitoring into a single owned system. Completely different roles. Most recruiters can’t tell them apart.

KORE1’s computer vision engineering practice sits inside our broader AI/ML engineer staffing service, which means clients get recruiters with technical depth across the full AI stack, not CV-only recruiters who can’t evaluate the surrounding infrastructure. We’ve placed CV engineers in autonomous vehicle perception stacks, robotic inspection lines, FDA-regulated medical imaging platforms, physical security analytics, and brick-and-mortar retail heatmapping systems. We don’t match keywords. We match context.

If you’re sourcing IT and tech talent beyond CV, our full practice covers data science, MLOps, cloud, and DevOps.

Roles We Place in Computer Vision

Three of the last five computer vision searches we ran closed in under 14 days. One took 38 days because the client was building a medical imaging platform and needed CUDA kernel optimization experience alongside DICOM parsing knowledge and an understanding of FDA Class II software development constraints — a combination that shrinks the available pool to maybe 40 people nationally with active job interest. Specificity like that takes a different kind of search. We know how to run it.

- Computer Vision Engineer

- 3D Vision / Depth Sensing Engineer

- Real-Time Inference Engineer

- Edge AI / Embedded Vision Engineer

- Medical Imaging Engineer

- Autonomous Perception Engineer

- CV Research Engineer

- MLOps Engineer (CV pipelines)

- Applied Computer Vision Scientist

- Robotics Vision Engineer

Need a direct hire or a contract engagement? We do both, and we can tell you which is more common for CV roles at your company’s stage.

Hire the Way That Makes Sense for Your Project

Not every computer vision search looks the same. A six-month model rebuild isn’t the same as a permanent head of perception. We match the engagement model to the actual work.

Contract

Bring in a CV engineer for a defined build cycle, a model sprint, or interim pipeline coverage without long-term headcount pressure.

Contract-to-Hire

Try before you commit. CV engineering roles carry real ramp cost — contract-to-hire protects both sides and improves retention.

Direct Hire

For senior perception leads and staff-level CV architects where long-term team fit and deep domain alignment are the whole point.

Project-Based

Access focused CV expertise for a proof-of-concept, model audit, or production deployment without building a permanent team first.

How KORE1 Fills Computer Vision Roles

Scope the role, not just the title

We dig into whether you need training, inference, edge deployment, or all three. The intake call shapes everything that follows.

Deliver technically vetted candidates

Every CV candidate is screened by recruiters who know the difference between a pretrained model wrapper and someone who’s built a pipeline from scratch.

Support the hire beyond day one

We stay engaged after placement. If something shifts, we move fast to protect your timeline.

Common Questions

What skills separate a real computer vision engineer from someone who just knows the basics?

A production-ready computer vision engineer can optimize inference latency, deploy models to edge hardware, and write custom CUDA kernels when off-the-shelf TensorRT isn’t fast enough. Critically, they can also tell you which of those is the actual bottleneck before writing a line of code, which can save a week of GPU time spent on the wrong problem. The gap between someone who fine-tuned a YOLO model in a Colab notebook and someone who shipped a real-time detection system running at 60 fps on an NVIDIA Jetson is enormous. We focus on that gap in every search we run. LinkedIn doesn’t surface it. Screening calls do.
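For a sense of what "finding the bottleneck first" can look like in practice, here is a minimal sketch that times each stage of a pipeline against a per-frame budget. Everything in it is an assumption made for illustration: the stage names, the `busy_wait` stand-ins, and the simulated timings are hypothetical, and a real pipeline would call an actual decoder, model, and post-processing step. Only the 60 fps target comes from the answer above.

```python
import time

FPS_TARGET = 60                     # frame rate quoted in the answer above
BUDGET_MS = 1000.0 / FPS_TARGET     # per-frame budget, roughly 16.7 ms


def busy_wait(ms):
    """Burn CPU for about `ms` milliseconds (stand-in for real work)."""
    end = time.perf_counter() + ms / 1000.0
    while time.perf_counter() < end:
        pass


# Hypothetical stages with made-up costs; replace with real pipeline calls.
def preprocess(frame):
    busy_wait(2)
    return frame


def inference(frame):
    busy_wait(10)
    return frame


def postprocess(frame):
    busy_wait(1)
    return frame


STAGES = [
    ("preprocess", preprocess),
    ("inference", inference),
    ("postprocess", postprocess),
]


def profile_stages(stages, frame, runs=20):
    """Return mean wall-clock milliseconds per stage over `runs` passes."""
    totals = {name: 0.0 for name, _ in stages}
    for _ in range(runs):
        data = frame
        for name, fn in stages:
            start = time.perf_counter()
            data = fn(data)  # each stage feeds the next
            totals[name] += (time.perf_counter() - start) * 1000.0
    return {name: total / runs for name, total in totals.items()}


def find_bottleneck(timings):
    """Name of the stage with the largest mean time."""
    return max(timings, key=timings.get)


if __name__ == "__main__":
    timings = profile_stages(STAGES, frame=None)
    for name, ms in timings.items():
        print(f"{name:<12} {ms:6.2f} ms")
    print(f"total {sum(timings.values()):.2f} ms, budget {BUDGET_MS:.2f} ms")
    print("bottleneck:", find_bottleneck(timings))
```

With these simulated costs, the inference stage dominates, so that is where optimization effort would go first. On real hardware the answer is often less obvious, which is the point of measuring before optimizing.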

How long does it take to hire a computer vision engineer?

KORE1’s average time-to-hire for computer vision engineers is around 17 days. That’s across all engagement types. Senior roles that require a rare combination — say, real-time stereo vision plus medical device regulatory experience — can run 4 to 6 weeks. Contract roles with a narrower scope tend to close faster, sometimes inside 10 days when we have the candidate already active in our network.

What’s the difference between a computer vision engineer and an ML engineer?

Computer vision engineers specialize in image and video data — object detection, segmentation, pose estimation, depth sensing, and real-time video analytics. ML engineers work across modalities and are often more focused on model training pipelines, feature engineering, and production serving at scale. The overlap is real, but the depth differs. A CV engineer who’s never touched a camera sensor isn’t the same as one who’s debugged color space artifacts in a production system at 2am. Job titles rarely capture that.

What do computer vision engineers earn in 2026?

Mid-level computer vision engineers typically earn $145,000 to $185,000 in base salary. Senior and staff-level engineers, particularly those with autonomous systems, medical imaging, or LiDAR-camera fusion experience at a major AV program, often push $200,000 to $260,000, with some outlier staff roles at Waymo, Tesla, and Apple clearing $300,000 in total comp when RSUs are included. Contract rates tend to run $85 to $135 per hour depending on specialization and location. According to the U.S. Bureau of Labor Statistics Occupational Outlook Handbook (2024), software developer and engineer roles remain among the highest-compensated occupations nationally. CV specialists with production deployment experience consistently benchmark above the median for their job family.
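To put the hourly contract range next to the salary figures, a rough annualization helps. The sketch below assumes a full-time schedule of 40 hours a week for 52 weeks (2,080 hours), which is an assumption for illustration and not a KORE1 figure. Real contract income is usually lower once unbilled time, benefits, and taxes are accounted for.

```python
HOURS_PER_YEAR = 40 * 52  # assumption: 2,080 billable hours, no time off

CONTRACT_RATE_RANGE = (85, 135)  # dollars per hour, from the range above


def annualize(hourly_rate, hours=HOURS_PER_YEAR):
    """Gross annual equivalent of an hourly rate under the assumed hours."""
    return hourly_rate * hours


low, high = (annualize(rate) for rate in CONTRACT_RATE_RANGE)
print(f"${low:,} to ${high:,} gross per year")
# prints "$176,800 to $280,800 gross per year"
```

Under that assumption, the contract range lands between the top of the mid-level salary band and somewhat above the senior band, before any of the adjustments noted above.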

What industries hire the most computer vision engineers?

Autonomous vehicles and robotics are the most active verticals right now — both require perception engineers who can work with LiDAR, radar, and camera fusion simultaneously, understand sensor calibration pipelines, and write C++ fast enough to meet deterministic real-time requirements that Python simply can’t satisfy at scale. Medical imaging is a close second, with FDA regulatory overlay adding another layer of difficulty because candidates need to understand IEC 62304 software lifecycle requirements alongside their imaging expertise. Retail analytics, manufacturing quality inspection, and security surveillance round out the common hiring categories. Each vertical has different stack preferences: AV leans C++ and ROS, medical leans Python and DICOM, manufacturing often involves proprietary GigE Vision systems.

Can KORE1 fill contract computer vision roles fast?

Yes, and contract is often our fastest CV search because we maintain an active bench of computer vision engineers between assignments who have cleared our technical screen and are available for immediate deployment. When the scope is clear and the rate is market-aligned — meaning you’re not asking a $120/hr TensorRT engineer to work for $75 because it’s “just a contract” — we’ve delivered vetted candidates in as little as 4 to 7 business days. The faster fills happen when clients can answer two questions upfront: what inference target are you optimizing for, and what’s the deployment environment. That narrows the field significantly.

Ready to Hire

Find Computer Vision Engineers Who’ve Shipped Real Systems

Tell us the role, the stack, and the timeline. We’ll have candidates in front of you — not resumes, candidates.

Start Your Search →