Hiring AI engineers in 2026 means navigating a market where average salaries have climbed past $200,000, job postings nearly doubled year over year, and companies routinely lose top candidates to faster competitors within three weeks. This guide covers real salary benchmarks by specialization, the technical skills that matter for production AI work, common hiring mistakes, and when working with a specialized staffing partner makes sense.

We had a client last quarter who spent four months trying to hire a machine learning engineer. Four months. They posted on LinkedIn, ran ads on Indeed, had their internal recruiter reaching out to people. Nothing stuck. The candidates they liked ghosted them after round two. The ones who made it to the offer stage turned them down flat.

Turns out their budget was pegged to 2023 salary data. They were offering $145K for a role the market had repriced to $190K while they weren’t looking.

That story isn’t unusual. We hear some version of it almost every week now. AI hiring has gotten weird, expensive, and fast in ways that catch people off guard. The candidate pool is small. The money is enormous. And the gap between someone who can tinker with a model in a Jupyter notebook and someone who can actually ship a production ML system? That gap costs companies real money when they can’t tell the two apart during interviews.

So here’s what the market really looks like. Not the LinkedIn thought leadership version. The version we see from inside hundreds of searches a year.

What’s Going On in the AI Talent Market

The headline numbers are wild. AI and machine learning job postings jumped 89% in the first half of 2025 compared to the same stretch the year before. The global AI market is barreling toward $1 trillion by 2027. Analysts keep throwing around the figure of 97 million new AI-related jobs worldwide.

But numbers like that don’t help you fill the role sitting open on your team right now.

What actually matters is this. The type of AI work companies need done has changed fundamentally. Back in 2023, lots of companies were running pilot programs. Proof of concept stuff. Cool demos that got the board excited. Now those same companies want to turn those pilots into production systems that customers actually touch. And that requires a totally different breed of engineer.

Finding someone to build a chatbot prototype? Relatively straightforward. Finding someone who can fine-tune a large language model on your proprietary data, wire it into your existing infrastructure, build monitoring around it, and keep it from hallucinating in front of your customers? That person is expensive. And they’ve got six recruiters in their inbox already.

Over 75% of AI job postings now specifically call out deep domain expertise. Generalists are getting squeezed out. Specialists with the right niche pull salaries 30% to 50% above generalists at the same level. The talent gap isn’t closing. If anything, it’s getting wider because entirely new job categories, things like agentic AI systems and RAG architecture, didn’t even exist as disciplines three years ago.

AI Engineer Salaries in 2026

Compensation in AI has reached a point where it genuinely surprises people who’ve worked in tech for decades. The average AI engineer salary crossed $206,000 in 2025. That was a $50,000 jump from the prior year. Not a typo. Fifty thousand dollars in a single year.

And 2026 is trending higher still.

General ranges across experience levels

Entry level, meaning zero to two years of real work experience, runs $120K to $150K. That’s entry level. For AI. Mid-career engineers with three to five years are landing between $150K and $220K depending on the specialization and the city. Senior folks with six-plus years? Anywhere from $200K to north of $312K. And then there are the research scientists at places like DeepMind or Anthropic pulling in $250K to $489K or more. Those numbers include equity, obviously, but still.

Specialization is where the real spread shows up

The generic title “AI engineer” is becoming less useful. What drives compensation now is the specific type of work someone does.

| Specialization | Mid-Level Range | Senior / Top-End | What We’re Seeing |
|---|---|---|---|
| Machine Learning Engineer | $149K – $219K | $230K+ | 9% year-over-year increase, one of the biggest jumps in all of tech |
| LLM / Generative AI Specialist | ~$174K average | $300K+ at leading labs | Hottest category; custom model fine-tuning work commands insane premiums |
| NLP Engineer | $170K – $188K | $220K+ | Most requested AI skill on the market; shows up in nearly 20% of all AI postings |
| Deep Learning Engineer | ~$212K average | $280K+ | Highest average comp in AI per recent industry benchmarks |
| Computer Vision Engineer | $160K – $200K | $240K+ | Manufacturing, healthcare imaging, and security keep demand steady |

Geography still plays a role, but honestly less than it did two years ago. San Francisco and New York anchor the top end. Remote work reshuffled the deck for mid-market companies. But even remote, you’re competing against the gravitational pull of Google, Meta, Amazon, and the big AI labs, which can put together total comp packages that most companies simply can’t match. That doesn’t mean you can’t win candidates. Plenty of talented AI engineers actively don’t want to work at those places. You just need to understand what you’re up against and position your offer around the things Big Tech can’t or won’t provide.

The Skills That Actually Matter When You’re Screening Candidates

This section could easily turn into a laundry list of buzzwords. I want to avoid that. Instead, here’s what we genuinely screen for when we’re placing AI and ML engineers for clients.

The stuff that’s non-negotiable

- Python. Still the lingua franca of AI work. But we look for engineers who write clean, maintainable code with real software engineering habits. Not just notebook scripting. There is a massive difference between those two things and it shows up fast in production.

- Deep learning frameworks. PyTorch dominates research work and is gaining ground in production too. TensorFlow still has a presence in deployed systems. Strong candidates usually know both but have a preference. That’s fine.

- ML fundamentals. Regression, classification, clustering, feature engineering, model evaluation. The basics. Tools get flashier every quarter but these core concepts haven’t changed and won’t anytime soon.

- MLOps and production deployment. Cannot overstate how important this is. CI/CD pipelines for ML, containerization with Docker and Kubernetes, model monitoring, automated retraining. This is where AI projects go to die or to actually make money. If your candidate can’t speak to production deployment with specifics, that’s a problem.

- Cloud platforms. AWS, Google Cloud, or Azure ML services. They need working knowledge of at least one. Enough to deploy models, manage training jobs, and scale inference without calling the cloud team for every little thing.

Where the premium salaries live right now

LLM fine-tuning and RAG architecture. This is on fire. Companies are done slapping a ChatGPT API call into their product and calling it AI. They want custom models trained on their own data. Engineers who know LoRA, QLoRA, retrieval-augmented generation pipelines, and vector databases like Pinecone or Weaviate are basically setting their own price. We had a candidate last month field three offers over $200K for this exact skill set. Mid-career. No PhD.

Agentic AI. Building AI systems that can plan and execute multi-step tasks on their own. This barely existed as a job category two years ago. Now it’s everywhere. The salary growth on agentic AI roles has been the steepest we’ve tracked across any AI subcategory. If you need this capability, start the search early because the supply is tiny.

Computer vision. CNNs, object detection, image segmentation. Manufacturing QC, medical imaging, security systems, autonomous vehicles. Steady demand. Not as hyped as LLM work right now but the need is consistent and the pay reflects it.

NLP. Transformers (BERT, GPT, T5), named entity recognition, sentiment analysis, text classification. The folks building chatbots, document processing, content generation. NLP is the single most requested AI skill on the market. About 20% of all AI job postings mention it.

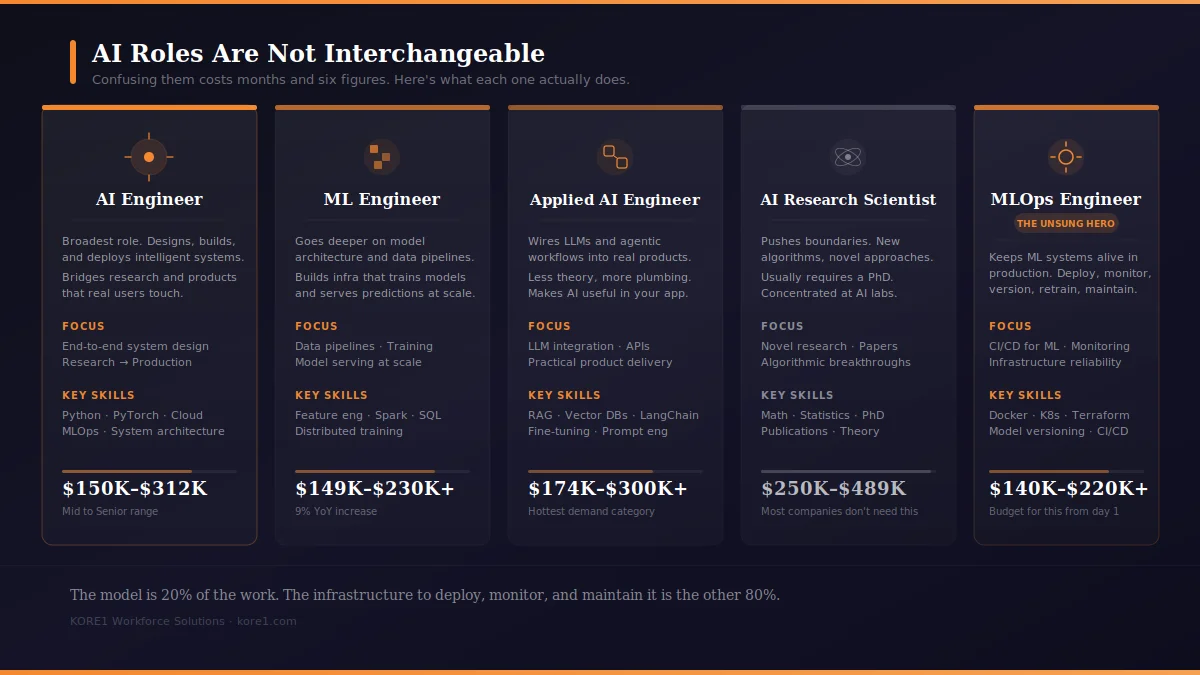

These Roles Are Not the Same (and Confusing Them Gets Expensive)

One thing that trips up hiring managers constantly. The job titles in AI all kind of sound alike. But the actual work is very different. Putting someone in the wrong seat wastes months and six figures.

Quick rundown so this is clear.

AI Engineers are the broadest category. They design, build, and deploy intelligent systems. They bridge the messy gap between research and products that real users touch.

Machine Learning Engineers go deeper on model architecture and data pipelines specifically. Building infrastructure that lets models train efficiently and serve predictions at scale. Lots of overlap with AI Engineer, but ML Engineers tend to live closer to the data.

Applied AI Engineers focus on wiring LLMs and agentic workflows into actual products. Practical integration. Less theory, more plumbing. If you need someone to take a foundation model and make it do something useful inside your app, this is who you want.

AI Research Scientists are the ones pushing boundaries. New algorithms. Novel approaches. Usually requires a PhD. Concentrated at AI labs and big tech. Unless you’re running a research division, you probably don’t need this role. And candidly, you probably can’t afford one.

MLOps Engineers are the unsung heroes. They keep ML systems alive in production. Deployment, monitoring, model versioning, infrastructure maintenance. Every company that’s ever had a model work beautifully in testing and then crash in production needed an MLOps engineer. They just didn’t know it yet.

What Actually Works for Hiring AI Talent

We’ve placed hundreds of AI engineers over the past few years. Some of those searches went beautifully. Some were painful. Here’s what separates the wins from the disasters.

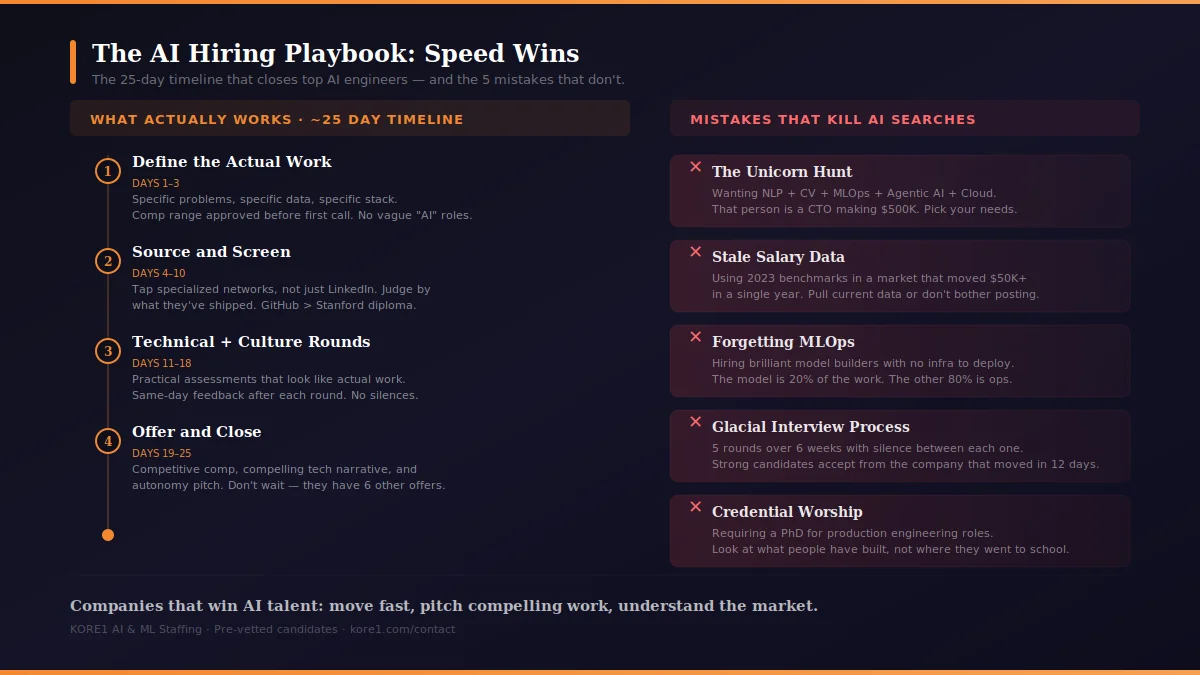

Speed wins. Period.

Top AI candidates receive multiple offers within days of starting to look. The average fill time for AI roles recently dropped to 25 days because smart companies have figured out that a drawn-out process is the same as a rejection. If your interview timeline stretches past three weeks, you are almost certainly losing people to someone who moved faster. Compress your rounds. Give same-day feedback after interviews. Have your compensation range approved before you make the first call. Indecision is the biggest competitor you have, and it doesn’t even buy you lunch.

Be specific about the work

Every company’s job posting says they’re doing “cutting-edge AI.” That phrase means nothing at this point. AI engineers want specifics. What data will they work with? What does the ML infrastructure look like today? What models are you building? What’s the production environment? What problem is this solving for the business? The more concrete and honest your description, the better candidates it attracts. Vague postings attract vague applicants. We see this play out over and over.

Judge people by what they’ve shipped

A strong GitHub portfolio tells you more than a Stanford diploma. Published work matters. Production deployment experience matters even more. We’ve placed engineers without PhDs who ran circles around research-pedigreed candidates because they’d actually built systems that real users depended on. Give candidates practical assessments that look like actual work. Stop asking whiteboard algorithm puzzles from 2015. Nobody is going to reverse a binary tree on your production floor.

Consider contract-to-hire

Contract and contract-to-hire models have picked up serious traction in AI hiring. They let you bring someone in for a specific project, evaluate how they actually perform on real work, and then convert to permanent if it’s a fit. No guesswork. Working with a specialized IT staffing partner makes this even smoother because you get access to pre-vetted candidates who can start contributing fast instead of spending weeks in a pipeline.

When Bringing in a Staffing Partner Makes Sense

Full transparency here. We are a staffing firm. So take this section with exactly that context. But we also know there are situations where doing this in-house works great and situations where it doesn’t. Being honest about the distinction is more useful than pretending everyone needs our help.

It generally makes sense to bring in a partner when you’re standing up AI capabilities for the first time and don’t have internal expertise to evaluate candidates properly. Or when you need a very niche skill, something like LLM fine-tuning or MLOps, and your Indeed postings are generating nothing useful. Or when the business timeline is tight and you need someone who’s already been vetted and can hit the ground running. Or when you’re trying to compete against Big Tech offers and you need someone who understands the market well enough to help you position.

A good staffing partner doesn’t just push resumes at you. They understand the technical landscape well enough to screen for real capability. They calibrate your expectations to the actual market. And they find people who care about things beyond raw compensation, because honestly, a lot of strong AI engineers are not interested in working at Amazon or Meta. They want interesting problems, autonomy, and a team that knows what it’s doing.

The Mistakes We See Over and Over

I could write a whole separate post just on this. But here are the ones that come up constantly.

The unicorn hunt. Looking for someone who’s an expert in NLP AND computer vision AND MLOps AND agentic AI AND cloud architecture. That person is a CTO making half a million dollars. They’re not applying to your mid-senior IC role. Pick the skills you actually need for the projects you’re actually doing. Hire for those.

Credential worship. PhDs absolutely matter for research positions. For production engineering? Some of the best people we’ve placed were self-taught or came through nontraditional paths. A bootcamp grad who’s deployed three production ML systems is more useful to most companies than a PhD candidate who’s never left the lab. Look at what people have built. Not where they went to school.

Forgetting about MLOps. This is the big one. Teams hire brilliant model builders and then wonder why nothing makes it to production. The model is 20% of the work. The infrastructure to deploy, monitor, retrain, and maintain it is the other 80%. Budget for MLOps from the start or your AI investment is a science project that never generates revenue.

Stale salary benchmarks. Using comp data from 2023 or even early 2024 is a guaranteed way to lose every candidate before the conversation starts. AI salaries moved faster than any other category in tech. Pull current data. Adjust your range. Or don’t bother posting the role.

Glacial interview processes. Five rounds spread over six weeks with two weeks of silence between each one. That tells a strong candidate everything they need to know about how your organization makes decisions. They draw exactly the conclusion you’d expect. And they accept the offer from the company that moved in twelve days.

Keeping Them After You Hire Them

Getting an AI engineer in the door is the hard part. Watching them leave six months later because you stuck them on maintenance work is the expensive part.

This field moves absurdly fast. If your engineers feel like they’re falling behind because the company won’t send them to a conference or give them time to learn new tools, they’ll leave. It’s not complicated. Anthropic reports 80% two-year retention partly because they invest in growth paths and internal mobility. You don’t need to be Anthropic. But you do need to show your people you take their development seriously.

Team composition matters too. You need a mix. People who push the technical boundaries and people who can deploy reliable systems that don’t break at 2 AM. Teams that only hire researchers produce impressive papers and nothing in production. Teams that only hire operators build stable systems that never improve. Get both in the room.

And the work has to be interesting. These people chose this career because they wanted to solve hard problems. Stick them on incremental tweaks to a dashboard nobody uses and they’ll be interviewing somewhere else within 90 days. Give them problems worth solving. Connect their daily work to something the business actually cares about. That’s retention.

Let’s Talk

The companies that are winning the AI hiring race in 2026 share three things. They move fast. They pitch compelling technical work, not just comp. And they understand the market well enough to make smart calls on role scoping, compensation, and engagement models.

We built our AI and ML staffing practice around understanding this stuff deeply. Our recruiters know the difference between an ML engineer and an MLOps engineer. They can evaluate real technical depth. And they work alongside your hiring team, not as a disconnected vendor.

Talk to a KORE1 recruiter today and get access to vetted AI engineers who are ready to make an impact on your AI initiatives.

Frequently Asked Questions

What is the average AI engineer salary in 2026?

It crossed $206,000 in 2025 and keeps climbing. Entry level sits between $120K and $150K. Mid-career runs $150K to $220K depending on specialization. Senior engineers hit $200K to $312K or higher. Specialists in deep learning, LLM work, and agentic AI push well above those ranges.

What’s the difference between an AI engineer and a machine learning engineer?

AI engineers work broadly across intelligent systems, bridging research and production. ML engineers go deeper on model architecture, data pipelines, and training infrastructure specifically. Tons of overlap. But ML engineers tend to live closer to the data and modeling side, while AI engineers take a wider view of how everything fits together in a product.

What skills should I prioritize when hiring AI engineers?

Python, deep learning frameworks like PyTorch or TensorFlow, solid ML fundamentals, and MLOps experience are the core. For 2026 specifically, LLM fine-tuning, retrieval-augmented generation, and agentic AI skills command the biggest premiums and are the hardest to find.

How long does it take to hire an AI engineer?

The market average has dropped to roughly 25 days. If your process takes longer than three weeks you’re probably losing top people to faster companies. A specialized staffing firm can compress that timeline by giving you access to candidates who’ve already been vetted.

When should I use an AI staffing agency instead of hiring directly?

When you’re building AI capabilities from scratch and lack internal expertise to evaluate talent. When you need niche skills that aren’t showing up in your applicant pool. When the timeline is tight and you need pre-vetted candidates fast. Or when you’re competing against Big Tech compensation and need market intel to make your offer competitive.

Is a PhD required to be an AI engineer?

For research scientist roles at AI labs, typically yes. For applied AI, ML engineering, and MLOps work, often no. We’ve placed many successful engineers without advanced degrees. Practical production experience frequently carries more weight than academic credentials for the roles most companies are actually trying to fill.