Data Engineer Interview Questions 2026: What They’ll Actually Ask You

Last updated: April 25, 2026

Data engineer interviews in 2026 lean on SQL window functions, idempotent pipeline design, dimensional modeling for siloed source systems, and at least one cloud stack (most often Snowflake plus Databricks on Azure or AWS), with growing weight on business-context reasoning over framework trivia. Most question banks online are still structured for 2022 hires. The interview you’re about to walk into has shifted. The technical bar is the same. The screening philosophy is not.

I’m Gregg Flecke. I run data engineering and analytics searches at KORE1, which means I sit on debrief calls after the interviews you don’t get to listen in on. That gives me a view of where candidates win and lose that’s different from anything you’ll find in a public question bank, because the deciding signal is rarely what the candidate said and almost always what the hiring manager said about them once the call ended. This guide is built off that view, plus the live searches I’m running right now for clients in private credit, healthcare IT, and SaaS who all want a data engineer who’s done it before, ideally on a stack they recognize, and ideally with at least one production story that ends in a 2am rollback they actually remember. If you’re studying for an interview this week, the rest of this is for you. If you want a sense of how KORE1 places data engineers, the data scientist and data engineer staffing page is the short version.

How a 2026 Data Engineer Loop Actually Looks

The shape of the loop matters. If you walk in expecting one technical screen and an HR call, you’ll be blindsided by the third hour of the onsite. Most loops in 2026 break into the following:

| Round | Format | What’s Really Being Tested |

|---|---|---|

| Recruiter screen | 25 to 30 min phone | Comp range, motivation, basic stack alignment |

| Hiring manager screen | 45 min | Resume drilldown, “tell me about a recent project,” culture read |

| Technical screen | 60 min live | Live SQL, sometimes Python data manipulation, occasional whiteboard |

| Take-home (optional) | 3 to 6 hours | Real-world pipeline build, often dbt or notebook based |

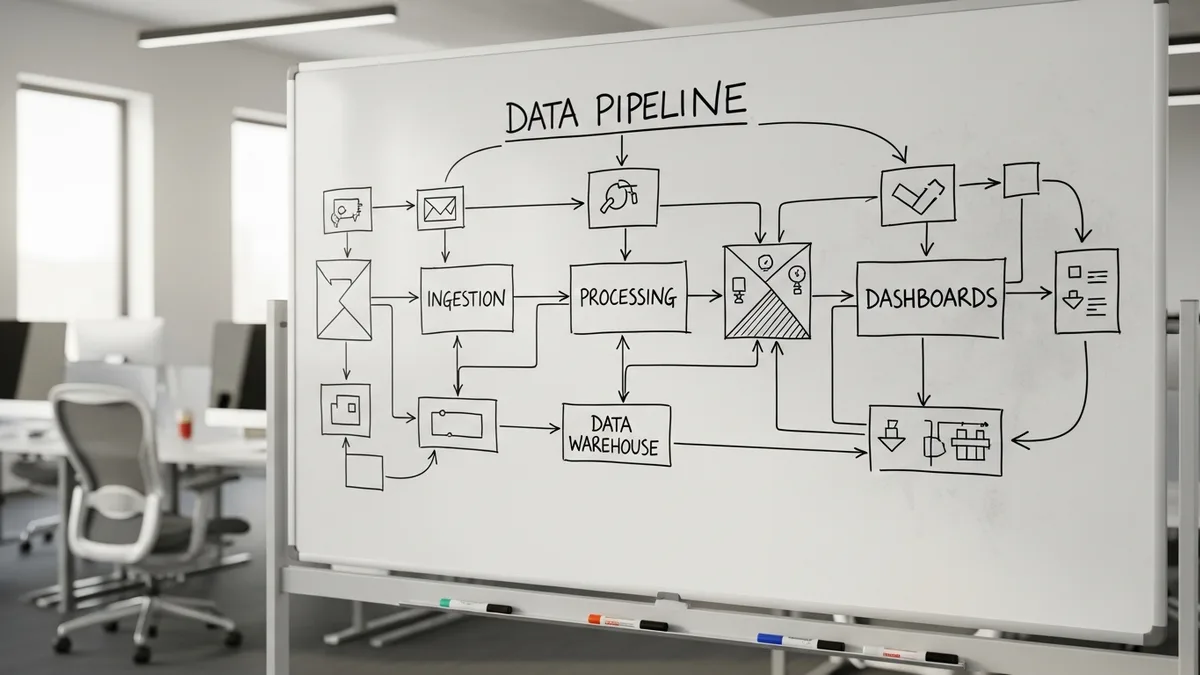

| System design | 60 to 75 min | Architect a pipeline from sources to dashboards, defend tradeoffs |

| Behavioral / cross-functional | 45 min | How you work with analysts, PMs, and skeptical stakeholders |

Five to seven rounds. Two to three weeks elapsed if the company is fast. Some companies have collapsed the recruiter and hiring manager screens into one call, and some have added a second technical round specifically for cloud stack questions, which is becoming more common at firms running active migrations between Snowflake and Databricks. The take-home is the most variable. Some candidates love it. Some refuse to do it. Both reactions are reasonable.

One framing point before the questions. The biggest 2026 shift I’ve seen on debriefs is that hiring managers want to see you reason about the business, not just the data, and that shift cuts across every vertical I’ve worked in this year, from private credit to healthcare IT to vertical SaaS. A senior data architect I’ve worked with on a recent private credit search put it bluntly to me on a debrief. “If you don’t understand the business and how the business operates, you cannot build a good data model, warehouse, lakehouse, anything.” He says that to candidates in the first ten minutes, makes a decision about whether they’re hireable somewhere around minute thirty, and runs the technical questions in the second half of the call as confirmation rather than discovery. Internalize that and the rest of this gets easier.

Why the Public Question Banks Don’t Match What’s Asked

Most of the lists you’ll find on the first page of Google were last meaningfully updated in 2022 or 2023, and they show their age in predictable ways. They overweight Hadoop, Hive, MapReduce, and on-prem Spark trivia that almost no cloud-native shop is asking about anymore, while underweighting Snowflake-specific behavior, Databricks workflow design, dbt mental models, and the kind of judgment questions that have started to dominate senior-level rounds across every search I’ve run in the past twelve months.

The actual questions skew toward four buckets:

- SQL at production volume, not toy-table SQL. Window functions, anti-joins, deduplication patterns, slowly changing dimensions.

- Pipeline reliability, framed as scenarios. What do you do when the upstream API silently changes a field type? When a backfill needs to rerun nine months of data without double-counting?

- Cloud-native specifics. Snowflake micro-partitions and clustering keys. Databricks Photon vs. classic compute. Azure Data Factory triggers vs. Airflow.

- Modeling judgment. Why dimensional modeling still wins for analytical workloads even when the warehouse can technically handle one-big-table.

If you’ve been preparing from a 2022-vintage list, you’re answering a different exam than the one being given.

SQL Questions That Actually Get Asked

SQL is still the gate. Every senior data engineer interview I sit on the other side of starts here. The questions are not hard if you’ve used SQL in production for the past year. They are brutal if you haven’t.

“Find the second-highest salary per department, but break ties by hire date, oldest first.”

This sounds like a window function warmup. It is. The trap is in the tie break. Candidates who reach for RANK() get duplicates wrong, and DENSE_RANK() handles ties but doesn’t actually break them in the way the prompt is asking for. The right answer uses ROW_NUMBER() OVER (PARTITION BY department_id ORDER BY salary DESC, hire_date ASC) and filters where the row number equals 2. If you can’t write window functions fluently, drill them this week. They show up in some form on roughly 80% of the technical screens I see.
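Here is a minimal sketch of that answer, run against an in-memory SQLite database as a stand-in for a real warehouse. The `employees` table, its columns, and the sample rows are made up for illustration. One thing worth raising with the interviewer: `ROW_NUMBER()` returns the second row in the ordering, so if two people share the top salary, row 2 is the later-hired of those two rather than someone on the next salary tier down.

```python
import sqlite3

# In-memory SQLite stands in for the warehouse; window functions need SQLite 3.25+.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE employees (
    emp_id INTEGER PRIMARY KEY,
    department_id INTEGER,
    salary INTEGER,
    hire_date TEXT
);
INSERT INTO employees VALUES
    (1, 10, 150000, '2019-03-01'),
    (2, 10, 120000, '2021-06-15'),
    (3, 10, 120000, '2018-01-10'),
    (4, 20,  90000, '2020-09-01'),
    (5, 20,  85000, '2022-02-02');
""")

QUERY = """
WITH ranked AS (
    SELECT emp_id, department_id, salary, hire_date,
           ROW_NUMBER() OVER (
               PARTITION BY department_id
               ORDER BY salary DESC, hire_date ASC  -- tie on salary goes to the oldest hire
           ) AS rn
    FROM employees
)
SELECT department_id, emp_id, salary, hire_date
FROM ranked
WHERE rn = 2
ORDER BY department_id
"""

second_ranked = conn.execute(QUERY).fetchall()
for row in second_ranked:
    print(row)
```

In department 10, employees 2 and 3 tie at 120000, and the hire-date tie break picks employee 3.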

“You have a transactions table with 200 million rows and a customers table with 5 million. Some customers have no transactions. Write a query that returns each customer with their total transaction amount, treating no transactions as zero.”

Left join, sum, coalesce. The follow-up is the part to prepare for, because they’ll ask how you’d make it run faster. The good answer covers indexing on the join key, partition pruning if the warehouse supports it, and pre-aggregating transactions by customer in a CTE before the join, so the join handles one total per customer instead of 200 million raw rows. The great answer mentions that on Snowflake you’d lean on the automatic micro-partition pruning and check the query profile to see whether the optimizer is doing it, while on Databricks you’d consider a broadcast join on the smaller customers table so the 200 million transaction rows don’t have to be shuffled across the cluster.
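A small sketch of the base query, again on in-memory SQLite with invented table and column names. Amounts are stored as integer cents here, which is my assumption to keep the arithmetic exact. The CTE collapses transactions to one row per customer before the join.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE transactions (
    txn_id INTEGER PRIMARY KEY,
    customer_id INTEGER,
    amount_cents INTEGER
);
INSERT INTO customers VALUES (1, 'Acme'), (2, 'Birch'), (3, 'Cedar');
INSERT INTO transactions VALUES (1, 1, 500), (2, 1, 250), (3, 2, 100);
""")

QUERY = """
WITH totals AS (
    -- Pre-aggregate so the join sees one row per customer, not one per transaction.
    SELECT customer_id, SUM(amount_cents) AS total_cents
    FROM transactions
    GROUP BY customer_id
)
SELECT c.customer_id,
       COALESCE(t.total_cents, 0) AS total_cents  -- no transactions becomes zero
FROM customers AS c
LEFT JOIN totals AS t ON t.customer_id = c.customer_id
ORDER BY c.customer_id
"""

customer_totals = conn.execute(QUERY).fetchall()
print(customer_totals)
```

Customer 3 has no transactions and still appears, with a total of zero, which is the part of the prompt candidates most often drop by reaching for an inner join.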

“Walk me through how you’d deduplicate a slowly changing dimension table where source records sometimes update without changing the primary key.”

This is the one that separates candidates who’ve shipped pipelines from candidates who’ve only consumed them. The answer involves a hash of the relevant columns to detect changes, a window function to identify the latest version of each natural key, and a SCD Type 2 pattern with effective_from and effective_to columns that lets downstream queries reconstruct the state of any record at any historical point in time. Bonus points if you mention that MERGE in Snowflake or Delta Lake makes this less painful than it used to be.
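To make the hash-plus-Type-2 idea concrete, here is a rough pure-Python sketch. The tracked columns, the `customer_id` natural key, and the list-of-dicts "dimension table" are all assumptions for illustration. In a warehouse the same logic would normally be expressed as a MERGE rather than a loop.

```python
import hashlib

# Hypothetical: the columns whose changes should create a new version.
TRACKED_COLUMNS = ("name", "segment")

def row_hash(record):
    """Hash only the tracked columns so unrelated fields don't trigger a new version."""
    # A production version would want a delimiter that cannot appear in the values.
    payload = "|".join(str(record[col]) for col in TRACKED_COLUMNS)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def apply_scd2(dimension, incoming, as_of):
    """Append a new version only if the tracked columns changed; close the old one."""
    new_hash = row_hash(incoming)
    current = next(
        (row for row in dimension
         if row["customer_id"] == incoming["customer_id"] and row["effective_to"] is None),
        None,
    )
    if current is not None and current["row_hash"] == new_hash:
        return  # same content delivered again: treat as a duplicate and skip
    if current is not None:
        current["effective_to"] = as_of  # close the previous version
    dimension.append({
        "customer_id": incoming["customer_id"],
        "name": incoming["name"],
        "segment": incoming["segment"],
        "row_hash": new_hash,
        "effective_from": as_of,
        "effective_to": None,  # open-ended row is the current version
    })
```

Feeding the same record twice leaves one row. Feeding a record with a changed `segment` closes the old row and opens a new one, so a query filtered on `effective_from` and `effective_to` can reconstruct what was true on any date.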

“Here’s a query. Why is it slow?”

Open ended. They’ll show you something with a self-join, a function applied to a column in the WHERE clause that kills the index, or a subquery in the SELECT list that runs once per row in the outer query and turns one lookup into millions. Talk through what you’d check first. Cardinality estimates. Whether predicates are pushed down. Whether the join order matches the cardinality, and whether a CTE is being recomputed each time it’s referenced rather than materialized once and reused. The wrong move is to start guessing before asking what the table sizes are. Always ask first.
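The function-in-the-WHERE-clause case is easy to demonstrate locally. This sketch uses SQLite’s `EXPLAIN QUERY PLAN` on a hypothetical `orders` table. The exact plan wording differs between SQLite versions and looks nothing like a Snowflake or Databricks query profile, so treat it as an illustration of the principle rather than of any warehouse’s output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER PRIMARY KEY, created_at TEXT, amount INTEGER);
CREATE INDEX idx_orders_created_at ON orders (created_at);
""")

def plan(sql):
    """Return the human-readable detail column of EXPLAIN QUERY PLAN as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)

# Wrapping the indexed column in a function hides it from the index.
wrapped = plan(
    "SELECT amount FROM orders WHERE substr(created_at, 1, 10) = '2026-04-01'"
)

# The same filter written as a range on the bare column can use the index.
ranged = plan(
    "SELECT amount FROM orders "
    "WHERE created_at >= '2026-04-01' AND created_at < '2026-04-02'"
)

print("wrapped:", wrapped)
print("ranged: ", ranged)
```

The first plan reports a full scan of the table and the second reports an index search, even though both queries return the same rows.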

Python and Pipeline Questions

The Python questions are usually pragmatic, not algorithmic. Nobody is asking you to invert a binary tree. They’re asking what they’d actually pay you to do.

“Walk me through how you’d build an idempotent pipeline that pulls from a paginated REST API, handles rate limits, and lands the data in the warehouse.”

The word that earns points is idempotent. Then explain how you’d get there. Pagination state stored externally so a restart picks up where it left off, exponential backoff with jitter on 429 responses, writes to a staging table keyed on a deterministic hash of the source record so reruns don’t double-count, and a final merge into the production table that uses the hash as the dedup signal. The candidates who skip the idempotency framing and dive into requests.get code lose points fast. Hiring managers want to know that you’ve been paged at 3am for a pipeline that ran twice and accidentally tripled a revenue number on the executive dashboard.
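A stripped-down sketch of those pieces, with no network calls so it runs anywhere. Everything here is a stand-in: `pages` plays the paginated API, the `state` dict plays the externally stored checkpoint, and the `target` dict plays the staging-plus-merge step. The function names are mine, not from any library.

```python
import hashlib
import json
import random

def backoff_delays(max_retries, base=1.0, cap=60.0):
    """Exponential backoff with full jitter: each delay is uniform in [0, min(cap, base * 2**n)]."""
    return [random.uniform(0, min(cap, base * 2 ** attempt)) for attempt in range(max_retries)]

def record_key(record):
    """Deterministic hash of the source record; key order must not change the result."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def merge_page(target, page):
    """Insert only records whose hash is not already present. Returns the number inserted."""
    inserted = 0
    for record in page:
        key = record_key(record)
        if key not in target:
            target[key] = record
            inserted += 1
    return inserted

def run_ingestion(pages, target, state):
    """Resume from the stored checkpoint and advance it only after a page is merged."""
    for page_number in range(state.get("next_page", 0), len(pages)):
        merge_page(target, pages[page_number])
        state["next_page"] = page_number + 1  # in real life: persisted outside the process
```

Running `run_ingestion` twice, or rerunning it from page zero with an empty `state`, leaves `target` with the same records, which is the property the interviewer is listening for. A real client would sleep for each value from `backoff_delays` between retries on a 429.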

“The upstream API changes a field from a string to an object. Your pipeline starts failing. What do you do in the next hour?”

This is a real question. I’ve heard it asked verbatim three times this year. The answer they want goes roughly like this: stop the pipeline before bad data lands, look at the schema diff, decide whether to coerce, parse, or quarantine the new structure, and communicate with the team that owns the source system to confirm whether the change is intentional or an accident. If you can’t reach them in that window, write the pipeline to land the raw payload as a JSON column and project the typed columns downstream, which preserves the data without forcing a premature decision about the new schema. That last move is what senior candidates do. It’s also the move I’d recommend in nearly any ingestion pattern. Pull everything raw first, project later.
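As a sketch of “pull everything raw first, project later”: the landing step below stores the payload untouched, and a separate projection step copes with the field arriving as either a string or an object. The `address` field and its `line1` and `city` keys are hypothetical, and SQLite with a text column stands in for a warehouse JSON or VARIANT column.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_events (event_id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")

def land_raw(payload):
    """Landing never inspects the shape, so an upstream schema change cannot break it."""
    conn.execute("INSERT INTO raw_events (payload) VALUES (?)", (json.dumps(payload),))

def project_address(payload_text):
    """Downstream projection: accept the old string shape and the new object shape."""
    address = json.loads(payload_text).get("address")
    if isinstance(address, str):
        return address
    if isinstance(address, dict):
        parts = [address.get("line1"), address.get("city")]
        return ", ".join(part for part in parts if part)
    return None  # unknown shape: leave untyped and review it, the raw row is still there

land_raw({"id": 1, "address": "12 Main St, Irvine"})
land_raw({"id": 2, "address": {"line1": "9 Oak Ave", "city": "Austin"}})
land_raw({"id": 3, "address": 42})

projected = [
    project_address(payload)
    for (payload,) in conn.execute("SELECT payload FROM raw_events ORDER BY event_id")
]
print(projected)
```

All three payloads land. Only the projection has to change when the source team confirms what the new structure is supposed to be.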

“How do you handle a backfill that needs to reprocess nine months of historical data without breaking the downstream BI dashboards while the backfill is running?”

The answer involves a parallel write path, which means either a shadow table that the backfill writes to before swapping in atomically once the rebuild is verified, or a partition strategy where the backfill targets specific historical partitions while the live pipeline keeps writing to current ones without any coordination needed between the two paths. The naive answer is “I’d run the backfill at night when nobody’s looking.” That answer tells the interviewer you’ve never owned a 24/7 pipeline.
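The shadow-table variant can be sketched in a few lines. This uses SQLite and a pair of renames inside one transaction; the table names and the row-count check are placeholders for whatever verification you would really run. Snowflake has an `ALTER TABLE ... SWAP WITH` statement for the same purpose, and other warehouses have their own mechanisms.

```python
import sqlite3

# isolation_level=None puts the connection in autocommit mode so the
# BEGIN/COMMIT around the swap below is fully explicit.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.executescript("""
CREATE TABLE daily_revenue (day TEXT, revenue INTEGER);
INSERT INTO daily_revenue VALUES ('2026-01-01', 100), ('2026-01-02', 999);
CREATE TABLE daily_revenue_shadow (day TEXT, revenue INTEGER);
""")

def backfill_shadow(conn, rows):
    """The backfill writes only to the shadow table; dashboards keep reading the live one."""
    conn.executemany("INSERT INTO daily_revenue_shadow VALUES (?, ?)", rows)

def swap_if_verified(conn, expected_rows):
    """Swap the shadow table in only after a (deliberately simple) verification passes."""
    (count,) = conn.execute("SELECT COUNT(*) FROM daily_revenue_shadow").fetchone()
    if count != expected_rows:
        return False  # leave the live table untouched
    conn.execute("BEGIN")
    try:
        conn.execute("ALTER TABLE daily_revenue RENAME TO daily_revenue_old")
        conn.execute("ALTER TABLE daily_revenue_shadow RENAME TO daily_revenue")
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")
        raise
    return True
```

A failed verification leaves the dashboard’s table exactly as it was, and the old table is kept under a new name after a successful swap so a rollback is one more rename.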

“Tell me about late-arriving data and how you handle it.”

Late-arriving facts. Late-arriving dimensions. Both happen, and the solutions are different. The fact pattern is a transaction recorded yesterday that hits your warehouse three days later because of a partner’s batch schedule, while the dimension pattern is a customer who signs up today but whose CRM sync is delayed and whose transactions arrive referencing a customer ID your dimension table doesn’t yet have. The fixes diverge: watermarking and reprocessing windows for facts, deferred dimension lookups or late-binding joins for dimensions, and an explicit policy about what happens to records that arrive outside the watermark. If you’ve never thought about either, the question reveals it instantly.
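For the fact side, the “explicit policy” can be as small as one function. This is a sketch under my own assumptions: a daily watermark, a fixed allowed-lateness window, and three outcome labels that I made up.

```python
from datetime import date, timedelta

def route_late_fact(event_date, watermark, allowed_lateness_days):
    """Decide what to do with a fact based on how far behind the watermark it is."""
    if event_date >= watermark:
        return "current"      # on time: goes through the normal load
    if event_date >= watermark - timedelta(days=allowed_lateness_days):
        return "reprocess"    # late but inside the window: rerun that day's partition
    return "quarantine"       # outside the window: park it and apply the written policy

watermark = date(2026, 4, 25)
print(route_late_fact(date(2026, 4, 25), watermark, allowed_lateness_days=3))
print(route_late_fact(date(2026, 4, 23), watermark, allowed_lateness_days=3))
print(route_late_fact(date(2026, 4, 10), watermark, allowed_lateness_days=3))
```

The point to make in the interview is that the third branch exists at all. Somebody has to decide, in advance, what happens to a record that shows up two weeks late.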

Data Modeling Questions

Modeling is where the senior signal lives. A candidate who can write fast SQL but defaults to one-big-table for every analytical workload is a junior in a senior’s chair.

“Walk me through how you’d model a sales fact table for a company with five sales channels, three product hierarchies, and a customer base where the same person can be a buyer at one company and a decision-maker at another.”

Star schema. Fact table at the grain of the individual sale. Dimensions for date, customer, product, channel, and sales rep. The decision-maker-versus-buyer wrinkle is what they’re actually testing, because it forces you to explain how a single dimension can play more than one role on the same fact row without collapsing the two relationships into a confused mess. The right answer uses either a bridge table or a role-playing dimension where the customer dimension is referenced twice from the fact, once as buyer_id and once as decision_maker_id, with a different alias each time so analytical queries can distinguish between them cleanly. Candidates who try to flatten this into a single denormalized table struggle to explain how to query it without confusing the two relationships.
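The role-playing version looks like this in practice. The schema is a toy one I invented (two customers, two sales) and SQLite stands in for the warehouse. The only part that matters is that `dim_customer` is joined twice under different aliases.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, full_name TEXT);
CREATE TABLE fact_sale (
    sale_id INTEGER PRIMARY KEY,
    buyer_id INTEGER REFERENCES dim_customer (customer_key),
    decision_maker_id INTEGER REFERENCES dim_customer (customer_key),
    amount INTEGER
);
INSERT INTO dim_customer VALUES (1, 'Ana Ruiz'), (2, 'Ben Cho');
INSERT INTO fact_sale VALUES (100, 1, 2, 5000), (101, 2, 2, 1200);
""")

QUERY = """
SELECT f.sale_id,
       buyer.full_name          AS buyer_name,
       decision_maker.full_name AS decision_maker_name,
       f.amount
FROM fact_sale AS f
JOIN dim_customer AS buyer
  ON buyer.customer_key = f.buyer_id                     -- same dimension, role 1
JOIN dim_customer AS decision_maker
  ON decision_maker.customer_key = f.decision_maker_id   -- same dimension, role 2
ORDER BY f.sale_id
"""

sales_with_roles = conn.execute(QUERY).fetchall()
for row in sales_with_roles:
    print(row)
```

Ben Cho is the decision-maker on sale 100 and both buyer and decision-maker on sale 101, and the query keeps the two roles separate without duplicating the customer table.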

“What’s the right grain for this fact table?”

Asked about something specific they’ll describe. The answer: the grain follows from the questions you most often need to answer, and it should be set at the lowest level that the foreseeable analytical use cases will need to drill into. If the dashboard asks “revenue by sales rep by week,” the grain is at minimum (sales_rep, week). If you might later want to drill into individual deals, the grain is the deal. The tradeoff is storage and query speed. A finer grain costs more space and runs slower on aggregations, while a coarser grain loses detail you can never get back without rebuilding the table. Candidates who can articulate the tradeoff get credit. Candidates who pick a grain without explaining why don’t.

“When would you use a slowly changing dimension type 2 versus type 1 versus type 6?”

Type 1 overwrites the old value. Use it when history doesn’t matter. Type 2 adds a new row with effective dates and is the workhorse pattern, useful when downstream analysis needs to know what was true at a point in time, which covers most analytical use cases involving customer attributes, employee attributes, or pricing changes that the business needs to see in historical context. Type 6 is a hybrid that keeps the type 2 history rows and also carries the current value on every row, and you reach for it when reports frequently need both perspectives without forcing analysts to write subqueries against the type-2 history. Most candidates know type 1 and type 2. Type 6 is a useful credibility flag. If you don’t know it, don’t bluff. Say you’d reach for type 2 and adapt if needed.
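If type 6 is new to you, a tiny in-memory sketch may help it stick. The `segment` attribute, the column names, and the list-of-dicts table are my own illustration, not a standard layout. Each row keeps the value that was true for its date range, plus a `current_segment` column that is overwritten on every version whenever the attribute changes.

```python
def apply_type6_change(rows, customer_id, new_segment, as_of):
    """Type 6 sketch: Type 2 history rows plus a Type 1 'current' column on every row."""
    for row in rows:
        if row["customer_id"] == customer_id:
            row["current_segment"] = new_segment   # Type 1 part: overwrite on all versions
            if row["effective_to"] is None:
                row["effective_to"] = as_of        # Type 2 part: close the open version
    rows.append({
        "customer_id": customer_id,
        "historical_segment": new_segment,  # what was true during this row's date range
        "current_segment": new_segment,     # what is true today
        "effective_from": as_of,
        "effective_to": None,
    })

dim = []
apply_type6_change(dim, 7, "SMB", "2025-01-01")
apply_type6_change(dim, 7, "Enterprise", "2026-02-01")
for row in dim:
    print(row)
```

After the second call, the 2025 row still says the customer was SMB at the time, and it also says the customer is Enterprise now, so a report can group old sales by either view without a second lookup.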

System Design: The Round That Decides It

The system design round is where senior candidates either make it or don’t. The format varies. The structure doesn’t.

You’ll be given a vague prompt. “Design a data platform for a B2B SaaS company that needs operational analytics on customer usage.” Or “Walk me through how you’d build the data pipeline for a private credit fund that needs to reconcile borrower data across three siloed systems.” Or “Imagine you’re hired to build the warehouse for a healthcare claims processor with HIPAA constraints.”

The wrong move is to start drawing boxes. The right move is to ask questions for the first ten minutes.

- What are the source systems? How many? What’s the volume per day?

- What latency does the business need? Real-time, near-real-time, daily, weekly?

- Who consumes the output? Analysts, executives, automated systems, customers?

- What’s the regulatory environment? HIPAA, GDPR, ILPA, SOC 2?

- Is there an existing warehouse or are we greenfield?

- What’s the budget posture, fast and expensive or slow and cheap?

Only after that should you start sketching architecture. Source systems on the left. Ingestion layer next. Storage in the middle, with a clear answer about why Snowflake or Databricks or BigQuery and not the other two. Transformation layer with dbt or native SQL. Serving layer for BI and downstream apps.

The questions they’ll dig into:

- Why this storage layer? What did you rule out and why?

- How does your design handle backfill?

- What breaks if a source system changes its schema?

- How do you monitor data quality? Where do alerts go?

- What’s the cost story at one year, three years, ten years of data?

The senior candidates I see win this round are the ones who push back on the prompt with specific, technically grounded objections rather than general resistance. “You said real-time. How real-time? Sub-second is a different system than five-minute lag.” “You said Snowflake. Have you measured your query patterns? Because if you’re doing heavy unstructured work, the cost story shifts and Databricks may end up cheaper at the volumes you’re describing.” Pushback that’s grounded in real tradeoffs reads as senior, while pushback that’s defensive or vague reads as junior, and the difference between the two is almost always in the specificity of the technical detail the candidate brings to the objection.

Cloud Stack Questions: Snowflake, Databricks, Azure

If the job description mentions a specific stack, expect at least 20 minutes of stack-specific questions. The deepest version of this round happens when the company is partway through a migration and wants to know if you can help them finish.

Snowflake-specific:

- What’s a micro-partition, and why does it matter for query performance? How do you tell if your clustering key is doing its job?

- When would you use a transient table versus a permanent table? What’s the time travel implication of each?

- You have a virtual warehouse running at high cost. Walk me through how you’d diagnose whether it’s right-sized.

- What’s the difference between a Snowflake stream and a task, and when would you use each?

Databricks-specific:

- What is the medallion architecture, and where does it break down at scale?

- Delta Lake handles ACID transactions on object storage. How? What problem does it actually solve?

- When would you reach for Databricks SQL warehouses versus all-purpose clusters?

- Photon engine. What is it doing differently and when does it matter?

Azure-specific:

- Walk me through the difference between Azure Data Factory, Synapse, and Fabric. Which would you pick for a new project today?

- How do you handle managed identity and key vault integration for a pipeline running on Azure Functions?

- If you’re landing data in ADLS Gen2 and querying with Synapse, what’s the file format conversation? Parquet vs Delta vs Iceberg?

One important note. If the job description mentions a stack you don’t know cold, don’t panic. The hiring managers I work with would rather hire a strong data engineer who is slightly behind on a specific stack than a candidate who has the right keywords on the resume but can’t reason about why one tool wins over another in the context of an actual production decision. Be honest about what you’ve used and what you’d need to ramp on. Pretending shows up immediately and tanks the loop.

Behavioral and Business-Context Questions

The behavioral round is rarely the round that gets you the offer, but it is often the round that loses it for you. Three patterns dominate in 2026.

“Tell me about a time you had to reconcile data across systems that didn’t agree.”

Asked in some form on nearly every search I run. The right answer has specifics. Two ERP systems with conflicting customer hierarchies. A CRM and a billing platform with mismatched IDs after an acquisition. Whatever your version is, name the systems. Name the size of the discrepancy. Name what you did. The candidates who answer in vague generalities (“we built a reconciliation process”) lose, while the ones who say “we found 4,200 customers had different parent_company values across Salesforce and NetSuite, I built a dbt model that flagged the conflicts, and we walked through them weekly with the ops team until we cleared the backlog over about six weeks” win because the level of detail signals real ownership rather than secondhand knowledge.

“Tell me about a stakeholder who didn’t agree with your data.”

Not whether stakeholders argue with data. Whether you handled the argument well. The strong answer involves taking the stakeholder seriously, investigating their numbers, and either explaining why your data is right or admitting where it was wrong, all without making the stakeholder feel like they wasted your time by raising the question in the first place. Defensive answers fail. So do answers where the candidate “convinced” the stakeholder. The good outcome is alignment, not victory.

“How do you explain a complex data problem to a non-technical executive?”

This is the question the architect I quoted earlier cares about most. Test it on a friend before the interview. Pick something genuinely complex. Late-arriving data. The reason a star schema beats a flat table. Why your warehouse cost spiked 40% last month. Practice explaining it without using the words “schema,” “join,” or “denormalize,” because the executive you’re describing this to in a real room won’t know what those words mean and will tune out the moment you reach for them. If you can’t, you’re going to lose this round to a candidate who can.

What Hiring Managers Are Quietly Testing For

From the debrief calls I sit on, three signals dominate.

Business-model literacy. Can you explain what the business does and why the data work matters? Candidates who answer “I built pipelines from sources to a warehouse” miss the point, because the hiring manager wants to know whether you understood the business well enough to make architectural decisions that fit it rather than just executing on a spec someone else handed you. The private credit firm I mentioned earlier rejected three candidates who couldn’t explain what an invoice factoring business actually does after a 30-minute conversation. The fourth candidate they interviewed walked them through the cash conversion cycle on a whiteboard. He got the offer.

Pragmatism over tool chasing. The same architect has a saying I’ve borrowed. “If you don’t need it, don’t build it.” Candidates who recommend a Databricks lakehouse for a 50-gigabyte workload lose, while candidates who recommend a star schema because it fits the actual queries the business runs against the data it actually has win, and the pattern repeats almost mechanically across every senior search I see. The hiring manager wants someone who picks tools to fit problems. Not someone who pattern-matches problems to tools they already know.

Working session quality. Some companies skip the take-home and replace it with a 90-minute working session, and they give you a real problem from their backlog along with whatever data they already have, then watch how you ask questions, how you push back, and what you actually build in front of them. The candidates who treat it like a test fail. The candidates who treat it like the first day of the job often get the offer. There’s a meaningful difference between the two postures.

Red Flags to Avoid

A short list of things I see candidates do that tank loops they would otherwise have won.

Recommending Databricks because it’s on the resume. Tools chase problems, not the other way around. If the volume doesn’t justify a lakehouse, don’t pitch one.

Skipping the question phase in system design. The first ten minutes of a system design round should be questions, not architecture. Candidates who skip to drawing lose points instantly.

Bluffing on a stack. Hiring managers can tell. Always. The recovery move is to say “I haven’t used this in production but here’s how I’d think about it.” That recovers credibility. Bluffing destroys it.

No production stories. A senior data engineer has been paged. Has done a 2am rollback. Has mistyped a WHERE clause and updated 40,000 rows incorrectly, and has spent the next four hours rebuilding the table from a backup while explaining what happened to a stakeholder who is not in a forgiving mood. If you have four years of experience and no specific incident comes to mind, the interviewer concludes either the experience isn’t real or you weren’t actually owning the systems. Both are bad reads.

Treating SQL as a junior topic. Senior candidates who breeze through SQL questions get hired. Senior candidates who treat SQL questions as beneath them get rejected. The interview isn’t testing what you know. It’s testing whether you stayed sharp on fundamentals after you got senior.

How to Prepare in Two Weeks

If you have an interview in two weeks and need a triage plan, this is what I tell candidates I’m submitting.

Days 1 to 3. Drill SQL window functions until you can write them without thinking. Practice on a real dataset. Stack Overflow’s public data dumps are free, large enough to expose performance differences between approaches, and structurally similar to the kinds of analytical questions you’ll be asked in a technical screen. Write the same query three ways and EXPLAIN each one.

Days 4 to 6. Build a small ingestion pipeline from a public API to a free Snowflake or Databricks tier, make it idempotent, make it handle pagination and retries with backoff, and introduce a schema change on purpose to see how your error handling reacts when the input structure shifts under it. The exercise itself becomes a story you can tell in the behavioral round.

Days 7 to 9. Read the Kimball Data Warehouse Toolkit chapters on dimensional modeling and slowly changing dimensions, which are old enough to have been written before most modern warehouses existed but accurate enough that the patterns still hold up against Snowflake, Databricks, and BigQuery without meaningful adjustment. Then practice modeling three real businesses you know well, on paper. A coffee shop. A B2B SaaS. A lending business.

Days 10 to 12. Practice system design out loud, by yourself or with a friend. Pick a prompt. Set a timer for 60 minutes. Talk through the questions you’d ask, the architecture you’d draw, and the tradeoffs you’d make at each layer of the stack. Record yourself. Listen to it. The first time will be rough.

Days 13 to 14. Review your own production stories and pick three: one technical win where the design choice paid off, one technical failure with a clean story about what you learned and what you changed afterward, and one cross-functional story where you had to convince a skeptical stakeholder of something the data was telling you. Rehearse them out loud until they sound natural without sounding rehearsed. The behavioral round is where most candidates underprepare.

Two weeks is enough if you actually do the work. It’s not enough if you watch tutorials.

Common Questions Candidates Ask Me Before the Interview

How important is it to know both Snowflake and Databricks?

Most senior data engineer roles in 2026 ask for one in production and familiarity with the other.

The split is roughly 60/40 toward Snowflake-primary shops in the searches I run, with the remainder Databricks-first or BigQuery-first depending on the vertical. If you’re deep on Snowflake, learning Databricks at a conceptual level (what is the medallion architecture, how does Delta Lake work, what’s Unity Catalog for, when do you reach for a SQL warehouse versus an all-purpose cluster) is enough to hold your own in most loops without overcommitting to expertise you don’t have. If the role specifically calls out a lakehouse architecture or heavy ML workloads, hands-on Databricks experience matters more. Don’t try to fake it. Say what you’ve used and what you’d ramp on.

Do I really need to know Python, or is SQL enough?

Python is required for senior data engineer roles in 2026. SQL alone caps you at junior-to-mid.

The Python bar isn’t algorithmic. It’s pragmatic. Read a JSON response, transform it, write it to S3 or a warehouse, handle errors gracefully without leaking secrets in a stack trace. If you can’t write that in 20 minutes without reaching for documentation, drill it. The 2025 Stack Overflow Developer Survey put Python at the top of professional data engineer language usage by a wide margin, and that hasn’t changed in 2026; the survey has the breakdown if you want the numbers.

Are take-home assignments still worth doing?

Yes for most roles, with one exception: take-homes that require more than six hours.

A four-hour take-home that asks you to build a small pipeline is a fair test of how you actually work. A 20-hour take-home is a free consulting gig dressed up as an interview, and it’s reasonable to push back on scope when the assignment is clearly larger than what would give the hiring manager a good signal in a reasonable amount of time. The phrasing I’d suggest: “I’d love to do this. Can we scope it to the four hours that would give you the strongest signal? I want to give you my best work.” Hiring managers respect that. The ones who don’t are telling you something about how they treat employees.

What if my SQL is rusty?

Two weeks of focused drilling closes the gap for most candidates with prior production experience.

SQL fluency degrades fast when you stop using it daily, but the good news is that it comes back fast too if you put in deliberate practice for two consecutive weeks instead of trying to cram everything into the night before the interview. Pick a free interview SQL practice site. Solve a problem a day. Make sure window functions, anti-joins, and grouped aggregations are second nature before you walk in. The hiring manager isn’t testing whether you can write SQL with a documentation tab open. They’re testing whether you can think in it.

Should I bring code samples or a portfolio?

A small, well-documented GitHub project beats a long resume.

One good repo with a clear README, sensible commit history, and a real (or realistic) dataset is more credible than three years of bullet points on a resume. Build it before the interview if you don’t have one. A simple dbt project on a public dataset, or the idempotent API ingestion pipeline I described above, is enough to give the hiring manager something concrete to look at and ask questions about during the technical round. Hiring managers will look at it. Even when they don’t, you’ll reference it in the behavioral round and have a real example to point to.

How long do data engineer searches usually take?

Two to four weeks from first conversation to offer for most senior roles working with a specialized recruiter.

KORE1’s average IT time-to-hire is 17 days across all roles. Senior data engineering searches with a specific stack requirement, meaning Snowflake plus Databricks plus Python plus modeling fluency plus at least some exposure to dbt, tend to run a little longer, in the 22 to 28 day range, because stacking those criteria narrows the available candidate pool. The variable is the candidate’s calendar more often than the client’s process. If you’re actively interviewing elsewhere, tell the recruiter early. Competing offers move the timeline. The data engineer salary guide covers comp benchmarks for the bands you’re likely negotiating in. If you’re a hiring manager reading this, the complete data engineer hiring guide walks the same loop from the other side.

Are interviewers really still asking about Hadoop and MapReduce?

Almost never in 2026. If they are, treat it as a signal about the company’s tech debt.

The exception is companies still operating large legacy on-prem clusters they’re migrating from, where the data engineering team needs at least some institutional knowledge of how the old system actually works in order to plan the migration without breaking the dashboards that the executive team has been looking at for the past five years. If a job description mentions Hadoop, the interview will probably ask about it, but as part of a conversation about migration rather than as a current technology evaluation. Most cloud-native companies stopped asking these questions around 2022. If a recruiter at a SaaS company asks you about MapReduce in 2026, ask them what business problem you’d be solving with it, and their answer will tell you whether it’s a real role or a poorly maintained job description.

The Short Version

Be fluent in SQL. Be honest about Python. Pick stacks based on problems, not the other way around. Have specific stories about pipelines that broke and what you did about it. Ask questions before you draw architecture. Explain things in plain English. Don’t bluff a stack you haven’t shipped on. Don’t treat fundamentals as beneath you because you’re senior.

If you want to talk through your search, or you want a sense of what KORE1 is seeing in the data engineering market right now across the verticals where we run active searches, reach out to our team. We work both sides of the table, candidate and hiring manager, and we can usually tell within a 20-minute call whether your background lines up with searches we’re running or partners we know are hiring at the seniority and comp range you’re targeting. If you want context on adjacent roles, the breakdown of data engineer vs data scientist responsibilities is worth a read before you commit to one path or the other.