SRE (Site Reliability Engineer) Job Description Template 2026

Last updated: May 14, 2026

A site reliability engineer keeps production running, owns the error budget that decides how often features can ship, and turns operational toil into automation. In 2026, U.S. base salaries run from $140,000 at mid-level to $260,000 for principal SREs at infrastructure-heavy companies. Most postings sitting open 70-plus days are actually three different SRE archetypes mashed into one bullet list. The template below is the JD shape we see close inside 30 days when hiring managers commit to one archetype and post the comp band.

Our IT desk gets a steady drip of SRE intake calls that all start the same. The platform team has been on call too long. Pages spike on Tuesday afternoons. The CTO finally gets quoted an availability number in a board deck and decides the company needs an SRE function. By the third call we are not talking about reliability anymore. We are talking about why the requisition has been open since February.

The recruiting problem is downstream. The JD describes a Kubernetes generalist who also runs incident command, also writes Terraform modules, also owns observability for thirty services, also designs chaos engineering experiments, and also reports up through engineering while embedded in product squads. No single human matches that shape. The pool of candidates who would have matched some of it gets confused, applies anyway, and the loop spends six weeks discovering the JD did not mean what it said.

Mike Carter, KORE1. I run the workforce and revenue side here, not the engineering bench. I do not write Terraform. I do read SRE intake calls back to clients before we open the search, and I have watched the same three patterns turn 30-day searches into 90-day ones. KORE1 collects a placement fee on the hire, so weight the recommendation accordingly. The JD template runs the same whether you partner with a staffing firm or post the role yourself.

Three Archetypes the Title Hides

Site reliability engineer in 2026 collapses three distinct roles into one title: platform SRE, product SRE, and customer reliability engineer. Each lives on a different team, screens for a different skill set, and lands in a different comp band roughly $30,000 to $50,000 apart.

Splitting the title is not pedantry. It is the difference between filling in 35 days and rebuilding the JD twice in three months.

Platform SRE. The most common shape and the one most hiring managers picture when they say “we need an SRE.” Owns the underlying infrastructure that every product team runs on. Kubernetes clusters, the service mesh, the observability stack, the deploy tooling, the IaC that provisions all of it. Sits next to the platform engineering team or inside it, depending on how the org evolved over the past four or five years and which leader happened to win the political fight over who owns shared infrastructure. Has been paged at 3 a.m. for a control-plane upgrade that went sideways and can describe in painful detail how the rollback unwound. Knows Prometheus deeply enough to argue with you about cardinality. Has opinions about Istio versus Linkerd that are tied to a real production incident, not a Hacker News thread from 2022. Mid-level platform SREs run $145,000 to $175,000. Senior runs $175,000 to $215,000 in non-coastal metros, $200,000 to $260,000 on the coasts. Principal pushes higher at companies where the platform is a real engineering surface, not a cost center to be quietly underfunded. Certifications matter less here than for solutions architect, but the Certified Kubernetes Administrator credential is a useful screener at the mid-level. Hands-on Terraform module work is the better signal at senior.

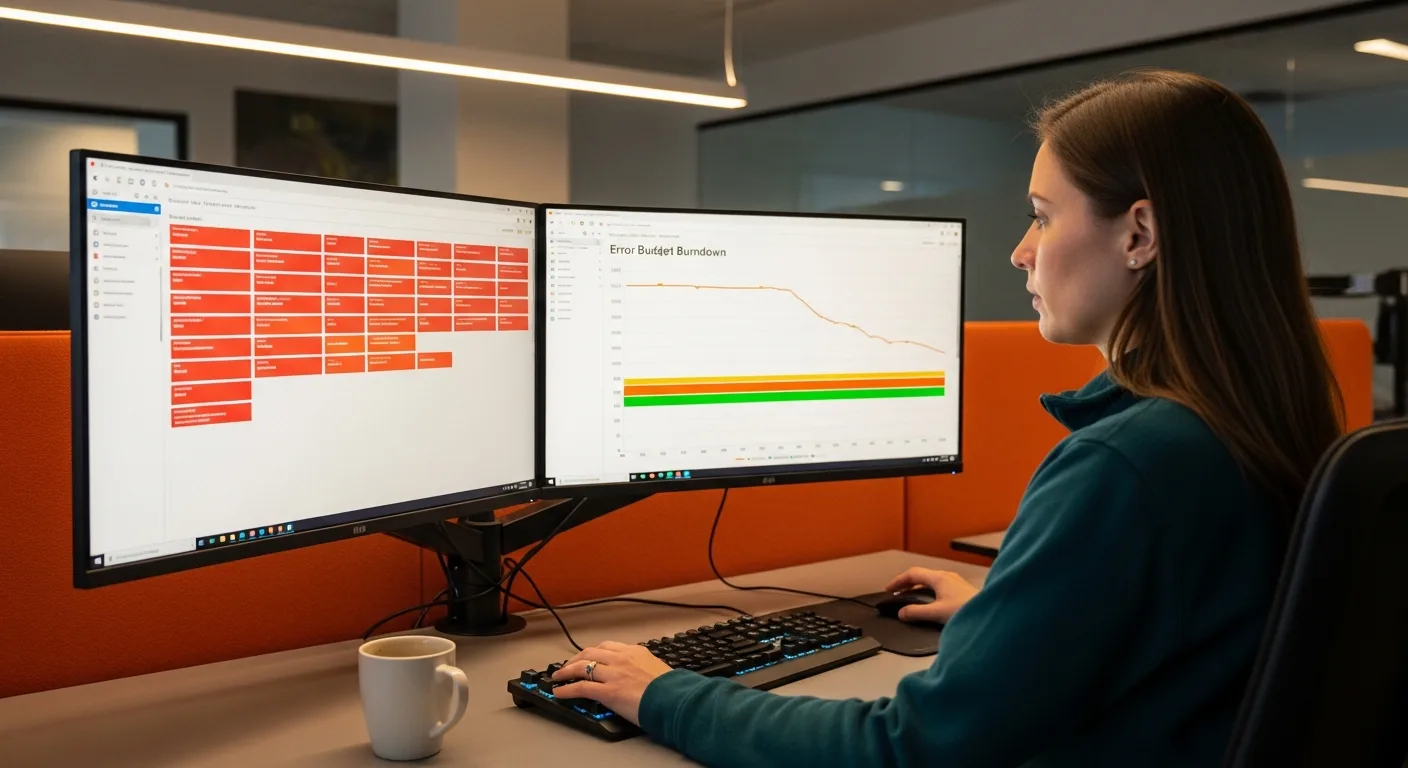

Product SRE. Different team, different rhythm. Embedded inside a product squad of seven to twelve engineers. Owns reliability for that team’s services specifically. Builds the SLIs, the SLOs, and the runbooks for the surfaces the team ships. Runs the post-mortems when those surfaces break. The work is half engineering and half organizational. A great product SRE will spend Tuesday morning writing a controller in Go and Tuesday afternoon convincing the product manager that next quarter’s roadmap needs a 20% reliability investment. The reason: the error budget burned through in three weeks, and the team is now in a feature freeze that nobody had socialized to leadership before the all-hands. The blend reads simple on paper. In practice the candidate pool is smaller than for platform SRE because most strong infrastructure engineers do not want to attend product sprint planning, and most product engineers do not want to be on call. Comp lands $135,000 to $210,000 for mid through senior. The roles that close fast post the on-call expectations up front. The roles that drag bury them.
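For readers who want the arithmetic behind "the error budget burned through in three weeks," here is a minimal sketch. The 99.9% availability target and the 30-day window are assumptions chosen for illustration, not numbers from any client, and the function names are hypothetical.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Minutes of allowed unavailability in the window for an availability SLO.

    slo_target is a fraction, e.g. 0.999 for "three nines" (assumed example value).
    """
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction between 0 and 1")
    return (1.0 - slo_target) * window_days * 24 * 60


def burn_rate(budget_spent: float, elapsed_days: float, window_days: int = 30) -> float:
    """How fast the budget is being spent relative to an even pace.

    budget_spent is the fraction of the budget consumed so far (1.0 = all of it).
    A result of 1.0 means the budget runs out exactly when the window ends;
    anything above 1.0 means it runs out early.
    """
    if elapsed_days <= 0 or elapsed_days > window_days:
        raise ValueError("elapsed_days must fall inside the window")
    return budget_spent / (elapsed_days / window_days)


if __name__ == "__main__":
    budget = error_budget_minutes(0.999, window_days=30)
    print(f"Budget for the window: {budget:.1f} minutes")

    # The whole budget gone after 21 days of an assumed 30-day window.
    rate = burn_rate(budget_spent=1.0, elapsed_days=21, window_days=30)
    print(f"Burn rate: {rate:.2f}x the sustainable pace")
```

Under those assumptions the team has about 43 minutes of budget per window and is spending it at roughly 1.4 times the sustainable pace. That is the number a product SRE brings to the roadmap conversation.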

Customer Reliability Engineer. Borrowed from Google’s CRE practice and showing up at more enterprise SaaS companies every year. Sits closer to the customer success or solutions engineering function than to platform. Works with strategic accounts on their reliability practices, runs joint incident reviews, and helps the customer build SLOs against the SaaS product. The technical depth is real, but the role lives or dies on customer communication. Base pulls $150,000 to $200,000 with an OTE bonus tied to account retention or expansion that pushes total comp into the mid $200s for senior. Misposting this as a generic “SRE” pulls infrastructure-only candidates who freeze in a customer call. Misposting it as a “solutions engineer” pulls candidates who never wrote a runbook.

Pick the archetype. Write the JD for that shape. The version of this role that closes inside our 30-day median is the one where the hiring manager can answer, in a single sentence, which team the SRE will sit on and which services they will own in the first 90 days.

| SRE Type | Mid-Level | Senior | Principal / Staff | Reports Through |
|---|---|---|---|---|
| Platform SRE | $145K-$175K | $175K-$220K | $220K-$280K | Infrastructure / Platform Eng |
| Product SRE | $135K-$170K | $165K-$210K | $205K-$260K | Engineering / Product |
| Customer Reliability Engineer | $140K-$170K + OTE | $170K-$210K + OTE | $200K-$250K + OTE | Customer Success / Revenue |

Sources: ZipRecruiter (April 2026), Glassdoor (2026), Levels.fyi (2026), KORE1 internal placement data 2025-2026. Ranges show the 25th to 75th percentile of base salary. Coastal metros (SF, NYC, Seattle, Boston) add 15-25%. Fintech, healthcare IT, and high-volume e-commerce verticals add 10-20%.

The SRE Job Description Template

Copy what fits. Cut what does not. The brackets are placeholders for your actual stack, your actual systems, your actual on-call rotation. The parentheticals are notes to the person writing the post and should never appear on the live listing.

Job Title

[Site Reliability Engineer (Platform) / Site Reliability Engineer (Product) / Customer Reliability Engineer]

(The qualifier in parens is the most useful word in the title. Skip it and the wrong half of the candidate pool applies. Add it and the JD does half the screening for you.)

About the Role

(Three sentences. What does this person keep alive? Who do they sit with? Is the role remote, hybrid, or onsite? Skip the company mission paragraph. Resume readers scroll past it.)

[Company Name] is hiring a [SRE archetype] to own reliability for [specific scope: our Kubernetes platform running 80 microservices / the payments product that processes 4M daily transactions / the multi-tenant SaaS surface that serves our top 40 accounts]. You will partner with [platform engineering / a product squad / customer success and solutions engineering] and report to [Director of Platform / VP Engineering / Head of SRE]. The role is [remote in the U.S. / hybrid in {city} / onsite in {city}] with [primary / secondary / shared] participation in a [follow-the-sun / U.S.-only / rotational] on-call rotation.

What You Will Own

(Six specific responsibilities. Each one should describe something this SRE would do in the first 90 days. Avoid the generic “ensure reliability” line. Name the SLOs, name the runbooks, name the alerts you want them to clean up.)

- Own SLOs and error budgets for [specific services or products], including the monthly review with engineering and product leadership where budget burn drives roadmap calls

- Lead incident response for [team or product scope], including command rotation, post-mortem authorship, and the action-item follow-through that turns each incident into one less of the same kind

- Build and maintain the observability stack for your scope, using [Prometheus / Grafana / Datadog / Honeycomb / New Relic / specific tools you actually run], with a focus on signal-to-noise and alert fatigue reduction

- Write infrastructure-as-code in [Terraform / Pulumi / Crossplane], including modules other teams reuse, with state management practices that the rest of platform can audit

- Automate toil ruthlessly, targeting a [50% / 40% / quantified] reduction in manual operational work across [scope] within the first two quarters, measured against the toil inventory you will inherit and refine

- Partner with [security / compliance / data platform / product] on cross-team reliability initiatives, including chaos exercises, dependency mapping, and the readiness reviews that gate launches above a defined risk tier

What You Bring

(Required and preferred, split clearly. Pad the required list and the pool shrinks. The candidates who would have been excellent self-select out the moment they fail a bullet that did not actually need to be there.)

Required:

- [4-6 / 6-8 / 8-12] years of production infrastructure or software engineering experience with at least [number] years in an SRE, platform, or DevOps-leaning role

- Hands-on production Kubernetes experience, including [cluster lifecycle / multi-cluster / multi-tenant] design choices and the operational scars that come with at least one cluster you helped recover from a bad state

- Strong scripting and software skills in [Go / Python / Rust], evidenced by tooling or controllers you can describe in interview, not just shell glue

- Production experience with [Terraform / Pulumi / CloudFormation] including module design, state isolation, and pipeline integration

- Observability fluency in [Prometheus, Grafana, OpenTelemetry / Datadog / Honeycomb / Splunk] including dashboard design, alert tuning, and the discipline to retire alerts no one acts on

- Real experience with SLI definition, SLO setting, and error budget conversations, including at least one budget burn that led to a roadmap change you can describe

- Comfort with on-call. Specifically, comfort with a [primary / rotating] page schedule that includes [overnight / weekend] coverage as part of the role

Preferred:

- Certified Kubernetes Administrator (CKA) or Certified Kubernetes Application Developer (CKAD)

- Cloud provider certification at the professional tier: AWS Certified DevOps Engineer Professional, GCP Professional Cloud DevOps Engineer, or Microsoft Certified: DevOps Engineer Expert

- Experience with chaos engineering tools (Gremlin, Chaos Mesh, LitmusChaos) and the practice of running scheduled failure exercises

- Experience with service mesh (Istio, Linkerd, Consul) at production scale

- Vertical experience in [your industry: fintech, healthcare IT, e-commerce, ad-tech, gaming, biotech]

- Background in security engineering, especially around supply-chain and runtime container security

Compensation and Benefits

(Post the range. Not optional. Twelve states and a growing number of cities now require it. Postings without ranges fill slower across our IT desk by roughly two weeks. A $40K band is fine and signals seniority without locking you into a number.)

- Base salary: $[X] – $[Y]

- [Additional components: equity grant, signing bonus, on-call differential, performance bonus]

- [Three or four benefits that actually matter: healthcare details, 401k match, PTO model, remote stipend, learning and development budget]

About [Company Name]

(Two or three sentences. Product, stage, team size, why a senior SRE should care. Skip the boilerplate that begins with “We are a fast-growing.”)

Where Most SRE JDs Lose the Right Candidates

Four mistakes show up in maybe three quarters of the SRE postings we benchmark on intake calls. None are subtle. All are fixable in one editing pass.

Asking for every cloud and every orchestrator. “Expert in AWS, GCP, and Azure. Strong knowledge of Kubernetes, Nomad, and ECS.” That candidate works at a vendor, not at your company. Real SREs go deep on one cloud. They pick up a second through migration projects. The few people who have actually operated all three at production scale are either consulting independently at $300 an hour, running platform engineering at companies that scoped the requisition properly before HR opened it, or no longer on the market at any price you have approval to pay. Three-cloud-expert bullets signal a JD that nobody on the engineering bench reviewed. Pick the cloud that runs production. Add the second as preferred only if you have an active migration that the new hire will own.

The on-call line buried in the benefits section. If the SRE will carry a primary pager 25% of weeks, say so in the second paragraph. Holding back on-call expectations until a late-stage interview costs offers. We had a search last quarter where the candidate made it to the final round before learning the rotation was follow-the-sun with a primary slot every third week, a detail that had been buried under a paragraph about wellness benefits and unlimited PTO. The candidate signed an offer elsewhere two days later. Time-to-hire on the rotational role we eventually closed dropped from 11 weeks to 33 days the moment the client moved the on-call disclosure into the JD’s first 200 words.

Confusing SRE with DevOps with no qualifier. Posting “SRE / DevOps Engineer” in the title is the fastest way to attract candidates from both pools who all then ask the same first-interview question: which one is this, actually? SRE and DevOps overlap, but the practices are not synonyms. SRE leans on SLO-driven reliability work, error budgets, and the production discipline that came out of Google’s playbook. DevOps leans on CI/CD pipelines, deployment automation, and developer enablement. A candidate happy in one is often miserable in the other. If you genuinely need both, hire both. If you need one, name it. We have a deeper write-up in our DevOps engineer JD template for the other side of that line.

Vague responsibilities that read like a vendor brochure. “Ensure 99.99% uptime.” “Drive operational excellence.” “Champion best practices.” Senior SREs scroll past those bullets. They want specifics. What do you run? What is the alert volume on a normal day? How mature is the observability stack, really? What does coverage feel like on a holiday weekend, or on a Tuesday night when the cluster takes a bad upgrade and the on-call has to decide whether to roll back during a deploy freeze? Name the services. Name the alert volume. Name the SLO you have not yet figured out how to measure. The candidates worth hiring will read those specifics and self-screen with more accuracy than your recruiter ever could.

Tools and Platforms to Name By Name

Generic SRE skills lists pull generic resumes. Name the tools you actually run. The candidates you want already match the production environment they are leaving. The ones who do not match self-select out, which is the goal.

Container orchestration. Kubernetes is the default. Name the managed service if you use one: EKS, GKE, or AKS. Mention if you run any clusters bare-metal, via Rancher, or on a Tanzu or OpenShift distribution that comes with its own operational quirks and license model that the new SRE will inherit on day one. If you still run on Nomad or DC/OS, or have a legacy ECS footprint that nobody has had the budget to migrate, say so plainly. SREs ask early because their experience does not transfer one-to-one across orchestrators.

Infrastructure-as-code. Terraform is the dominant choice in 2026. Pulumi has meaningful adoption. CloudFormation appears in AWS-native shops that resisted Terraform. Crossplane is rare but worth naming if you run it because it filters for a specific candidate. Listing “infrastructure-as-code experience” without naming the tool reads as a hiring manager who has not asked their senior engineers what they actually use.

Observability. Prometheus and Grafana remain the open-source spine for self-hosted shops. Datadog dominates the managed-SaaS side for mid-market and up. Honeycomb owns the high-cardinality tracing niche. Splunk persists in enterprise security-adjacent stacks. New Relic still appears at companies that locked in early. OpenTelemetry adoption is finally where the conference talks said it would be three years ago. Naming the platform is screener-level information that the strongest SREs use to decide whether to apply.

Incident response. PagerDuty is the default. Opsgenie persists in Atlassian-heavy shops. FireHydrant, incident.io, and Rootly have meaningful market share at companies that took incident management seriously enough to buy purpose-built tooling. If you still run on a Slack channel and a Google Doc, name that too. Some SREs prefer it. Most do not.

Service mesh and CI/CD. Istio, Linkerd, and Consul Connect. ArgoCD and Flux on the GitOps side. Spinnaker at large enterprises with legacy investments. Naming what you run signals that the platform is mature enough to have made the choice deliberately.

SRE Salary Benchmarks 2026

Four sources, real spread. The aggregator data underweights the high end because Levels.fyi-tier comp at FAANG and FAANG-adjacent companies does not always end up in public surveys. KORE1’s placement data sits at the senior end because that is where most of our IT desk volume lives. The Bureau of Labor Statistics groups SRE work under a broader Software Developer category, which pulls its figures toward the median for all developers rather than the specialty, so it is not included in the table.

| Source | Average / Median | Range (25th-75th) | Notes |
|---|---|---|---|
| ZipRecruiter (Apr 2026) | $138,910 | $109,500-$166,500 | Mixes junior through senior |
| Glassdoor (2026) | $167,400 | $133,000-$208,000 | Self-reported, skews mid-to-senior |
| Levels.fyi (2026) | $215,000 (median TC) | $170,000-$310,000 | Total comp, FAANG-heavy sample |
| KORE1 Placements (2025-2026) | $182,000 (median base) | $155,000-$220,000 | Senior-skewed, 30+ U.S. metros |

The Bureau of Labor Statistics projects 15% growth for software developer roles through 2034, with 129,200 annual openings. Site reliability sits inside that category. The qualified pool is not keeping pace. The senior SRE bench specifically is thinner than three years ago. The 2023 and 2024 layoffs at infrastructure-heavy companies pushed a meaningful slice of mid-career reliability engineers into adjacent platform-engineering and staff-engineering tracks that pay similarly without the rotational pager, and without the political tax of asking a product VP to slow a launch because the error budget burned through last week.

Geography moves the comp band more than most non-FAANG hiring managers expect. A senior platform SRE role in Costa Mesa at $185,000 base will compete head-to-head with the same role in Seattle at $215,000 and San Francisco at $230,000 base. The candidates running that comparison do it on their own laptop before they reply to a recruiter outreach. Our salary benchmark tool covers metro-level adjustments for SRE roles. Coastal premiums run 15% to 25%. Midwest metros run 10% to 18% below the coastal benchmark. Atlanta, Austin, and Denver run roughly 6% to 10% below.
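The comparison a candidate runs on their own laptop is simple enough to sketch. The snippet below uses the three base salaries from the paragraph above and treats the Costa Mesa number as the baseline. That baseline choice is an assumption made for illustration, and `premium_over` is a hypothetical helper name.

```python
def premium_over(baseline: float, other: float) -> float:
    """Fractional premium of `other` relative to `baseline` (0.10 means 10% higher)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (other - baseline) / baseline


# Base salaries quoted in the paragraph above (senior platform SRE).
offers = {
    "Costa Mesa": 185_000,
    "Seattle": 215_000,
    "San Francisco": 230_000,
}

if __name__ == "__main__":
    baseline = offers["Costa Mesa"]  # assumed reference point for this sketch
    for metro, base_pay in offers.items():
        print(f"{metro}: ${base_pay:,} ({premium_over(baseline, base_pay):+.1%})")
```

Against that baseline, Seattle comes out about 16% higher and San Francisco about 24% higher. Both land inside the 15% to 25% premium range quoted above.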

Certifications That Move the Needle

Certifications matter less for SRE hiring than for solutions architect or cloud architect roles. The signal is real but it sits below production scar tissue. Here is what each credential actually tells you when it appears on a resume.

Certified Kubernetes Administrator (CKA). The most useful credential at the mid-level. Demonstrates the candidate has spent the time to learn cluster operations end-to-end: pod scheduling, RBAC, networking, troubleshooting failed deployments, and the etcd-side work that catches people in the exam and in production. List as preferred at the mid-tier, optional at senior, because by senior the production experience matters more than the credential.

Certified Kubernetes Application Developer (CKAD). Less useful than CKA for SRE specifically. CKAD targets developers deploying onto Kubernetes. SREs need the administrator side. If a candidate has only CKAD, that is fine, but it is not a substitute for cluster ops experience.

AWS Certified DevOps Engineer Professional. Reasonable signal for SREs working in AWS-heavy shops. Covers CI/CD, monitoring, and the operational AWS surface area. Worth listing as preferred for senior roles on AWS stacks. Skip it for non-AWS environments.

GCP Professional Cloud DevOps Engineer. The Google-specific credential. Useful for SREs on GCP, more or less the same value as the AWS Pro for that environment. Particularly common at companies that adopted Kubernetes through GKE.

Microsoft Certified: DevOps Engineer Expert. Targets the Azure DevOps surface specifically. Useful in Azure-heavy shops, especially the regulated verticals where Microsoft has stronger compliance penetration.

One pattern from recent placements: SRE candidates with the CKA plus 6+ years of hands-on production infrastructure work close offers about 20% faster than candidates with either signal alone. The credential filters the pile. The production experience filters the offer. Skip required cert language entirely at the principal tier. By then the work history tells the story.

Interview Loop That Actually Screens for SRE Capability

The single most common interview-loop mistake we see is running an SRE candidate through four coding rounds and one cursory systems design conversation. That loop screens for software engineer. It does not screen for SRE.

One coding round. Sufficient for SRE roles. Pick a problem in the candidate’s stated primary language. Keep it under 60 minutes. The goal is to confirm the candidate can write code, not to evaluate them as a senior SWE. If your loop currently has three coding rounds, drop two.

One systems design round focused on reliability. Pick a real system you operate. Ask the candidate to design or critique its reliability properties. SLI selection. SLO target setting. Failure mode analysis. Dependency mapping. The cost of running the design at the next order of magnitude of traffic. Watch how they handle ambiguity. The questions they ask before sketching anything on the whiteboard, about scope, constraints, and which failure modes matter most, are themselves a screener that catches resume-padders inside the first ten minutes of the round. This is where senior SRE candidates separate themselves from senior backend candidates who happen to know Kubernetes.

One incident-response or post-mortem round. Walk the candidate through a real anonymized incident from your environment or a public post-mortem from a known company. Ask how they would have run the incident. Ask what action items they would write. Ask what they would have caught earlier. The shape of their answer is the signal. Strong candidates have opinions about blameless culture, action-item follow-through, and the dynamics of getting an action item from “in someone’s queue” to actually shipped.

One troubleshooting round. Live debug session against a known broken environment. Some companies use an internal sandbox cluster with seeded problems. Others use a recorded incident timeline and pause at decision points to ask the candidate what they would check next, what hypothesis they are forming, and which dashboard they would pull up before paging another engineer. The objective is to watch the candidate think under uncertainty, ask the right questions, and reach for the right tools without flinching at a red dashboard.

One organizational fit round. Hiring manager plus a senior engineer from the team. The conversation should cover on-call expectations specifically, error budget culture, the way the team handles competing roadmap and reliability work, and what the first 90 days actually look like. Skip the trivia. Use the time to make sure both sides understand the working agreement.

Five rounds. One coding. The shift from four-coding-plus-one-design to one-coding-plus-four-reliability is the single biggest loop change a hiring manager can make for SRE specifically. Candidates leave the loop more accurately calibrated. Offers land higher on average because the strongest reliability-focused engineers feel evaluated for what they actually do. Our IT desk has tracked offer-acceptance rates on SRE searches where the client adopted that loop shape against searches where the loop stayed coding-heavy. The accepted-offer rate ran 78% versus 51% across 22 placements. Small sample. Real signal.

Common Questions Hiring Managers Ask Us About SRE Searches

SRE versus DevOps versus platform engineer, where is the real line?

DevOps is a practice. SRE is a role. Platform engineer is a team. The three overlap, and the line you draw depends on how your org evolved.

DevOps describes a way of working across dev and ops, originally a cultural movement. SRE is the engineering role Google formalized for reliability work that uses production data to drive product decisions. Platform engineer often describes a team-shaped function building internal developer platforms. In practice many companies use the titles interchangeably and then discover during hiring that the candidates have different expectations. The fix is to specify the responsibilities, not just the title.

Do I really need a dedicated SRE if engineering is already on call?

Probably yes once you have more than 25 engineers, more than 30 services, or a customer SLA with a real penalty clause. Below that the work is real but can usually live as a half-time responsibility for a senior platform engineer.

The line clients cross is usually an outage with a board-visible cost, or the moment the platform team realizes they have been on primary pager for six months straight and the second-best engineer just gave notice. Both signals are late. The earlier signal is whether anyone has time to write the runbooks and post-mortems. If the honest answer is no, the org has already crossed the line, and the cost is showing up as unwritten incident reviews and quietly accumulating tech debt that nobody has time to file as a real ticket.

How long should an SRE search realistically take?

35 to 50 days for mid-level platform SRE in non-coastal metros when the JD names the stack and posts the comp band. Senior roles run 50 to 80 days. Customer reliability engineer roles run longer because the pool is smaller.

The variance is mostly JD quality and on-call clarity. Searches where the loop is coding-heavy and the on-call disclosure is buried run 90-plus days. Searches where the loop is reliability-focused and the comp band is posted close in our 35-day median. Geography matters less than the JD shape.

What is a reasonable on-call expectation for an SRE in 2026?

One week of primary rotation every three to four weeks is the median we see. Primary duty more often than every other week is a retention risk. Less than once a month often means the SRE is not close enough to production.

The on-call differential matters too. Companies that pay an explicit on-call premium, even a modest one, close roles faster and retain SREs longer. The differential matters more as a signal than as a dollar amount. It says the org acknowledges that carrying the pager has a real cost.
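To put a number on the rotation before it goes into the JD, a rough sketch like the one below converts rotation size into the share of weeks each engineer carries the primary pager. It assumes an evenly shared weekly rotation with one primary at a time and a 52-week year. Real schedules with secondaries, swaps, or follow-the-sun handoffs will differ, and the function names are hypothetical.

```python
def primary_share(rotation_size: int) -> float:
    """Fraction of weeks each engineer is primary in an evenly shared weekly rotation."""
    if rotation_size < 1:
        raise ValueError("rotation_size must be at least 1")
    return 1 / rotation_size


def primary_weeks_per_year(rotation_size: int, weeks_per_year: int = 52) -> float:
    """Approximate primary on-call weeks per engineer per year (assumes 52 weeks)."""
    return weeks_per_year * primary_share(rotation_size)


if __name__ == "__main__":
    for size in (2, 3, 4):
        share = primary_share(size)
        weeks = primary_weeks_per_year(size)
        print(f"{size}-person rotation: primary {share:.0%} of weeks, about {weeks:.0f} weeks a year")
```

A four-person rotation works out to primary duty 25% of weeks, or 13 weeks a year. A two-person rotation means every other week, which is the edge of the retention risk described above. Posting the share rather than "participates in on-call" gives candidates the number they will ask for anyway.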

Contract, contract-to-hire, or direct hire for SRE roles?

Direct hire for almost all of them. SRE compounds value through institutional knowledge of your specific production environment, and contract SREs rarely get deep enough fast enough to be net-positive on reliability work.

Exceptions: pre-launch reliability hardening with a hard deadline, post-incident remediation projects scoped at 3-6 months, or platform migration work where the SRE is essentially functioning as a senior platform engineer with a reliability focus. Those fit contract-to-hire well. Hourly rates for senior SRE contractors run $135 to $185 in 2026 depending on metro and stack. Our contract staffing and direct hire staffing models both apply, and we will recommend one over the other on intake.

Should I include salary in the SRE posting?

Post the range. Twelve states now require it by law, postings with bands close 22% faster across our IT desk, and a $40K spread is plenty to signal the level without locking you into a number.

The hiring managers who push back are usually worried that posting a number tips the hand on offer negotiation. Candidates already know the market, and a posting without a range gets read as a posting where HR set the number before the role was scoped. The candidate who sees a real range knows where the conversation will land and self-selects in or out before either side has wasted an hour, which is the entire point.

Where does an SRE actually fit in our org chart?

Platform SREs usually report to the infrastructure or platform engineering leader. Product SREs report through the product engineering org with a dotted line to reliability. Customer reliability engineers report through customer success or solutions engineering.

The dotted line matters. Reliability work that sits in a silo, with no influence into the product roadmap, ends up either fighting for budget or quietly losing the SRE you just hired. The orgs that retain SREs are the ones where the error budget conversation has a real seat at planning meetings.

Bringing in Outside Help

If the search has been open more than 60 days, or if the JD has been rewritten twice without the loop changing, the bottleneck is rarely the candidate pool. It is the spec. KORE1’s IT staffing services practice runs site reliability engineer staffing searches across all three archetypes described above. Our 92% twelve-month retention rate on placed SREs is the metric we watch most carefully on this role, because SRE retention is fragile and the cost of a mis-hire is six months of compound reliability work that does not happen. We also run DevOps engineer staffing when the real need is the other side of the same line, and we will tell you which one to hire on intake.

If you want a second set of eyes on a JD before you post it, or you want to scope the right archetype before opening the requisition, reach out to our team. Twenty minutes on a call usually saves four weeks on the search.