System Design Interview Questions for Senior Engineers 2026

Last updated: May 8, 2026

System design interview questions for senior engineers in 2026 test distributed systems reasoning, trade-off articulation, and production-scale architecture judgment across 45- to 60-minute collaborative sessions, with AI infrastructure questions now standard at most major tech employers. The question bank below comes from debrief calls after real interview loops, not from other listicles.

I placed a staff engineer at a Series C fintech last quarter. Strong resume. Twelve years of backend experience, mostly Java and Go. He failed the system design round. The feedback from the hiring manager was one sentence: “He described components but never discussed trade-offs.” That’s the gap this guide exists to close. Not what to study. How to think through the answer in a way that reads as senior to the person across the table.

I’m Mike Carter at KORE1. I run engineering and IT staffing searches, which means I hear both sides of the system design round. Candidates tell me what they prepared. Hiring managers tell me what they scored. The distance between those two things is where most failures live. KORE1 collects a fee when a hire closes through us, which is the part where you factor in my bias and decide for yourself what’s useful. The prep framework below is accurate regardless.

What Changed in System Design Interviews Since 2024

Two shifts worth knowing about before you prep a single question.

The first is AI infrastructure. In 2024, maybe one in ten system design loops included an ML-adjacent question. In 2026, it’s closer to half. “Design a real-time recommendation engine with an ML pipeline” is not a machine learning interview question. It’s a systems question about serving latency, model versioning, feature stores, and fallback behavior when the model service is down. You don’t need to know how gradient descent works. You need to know how to deploy a model behind an API gateway with a 99.9% SLA and explain what happens when inference takes 400ms instead of 50ms.

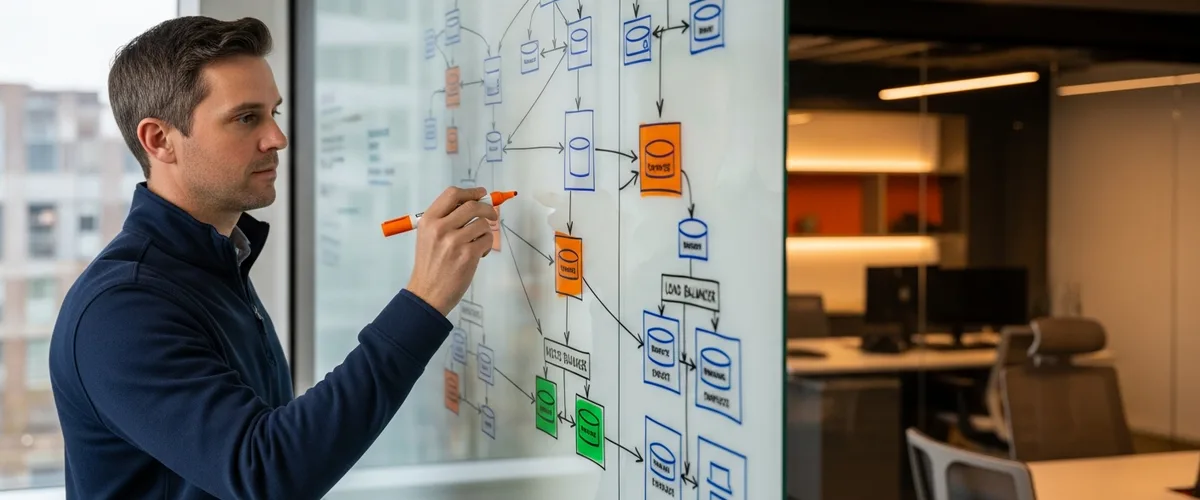

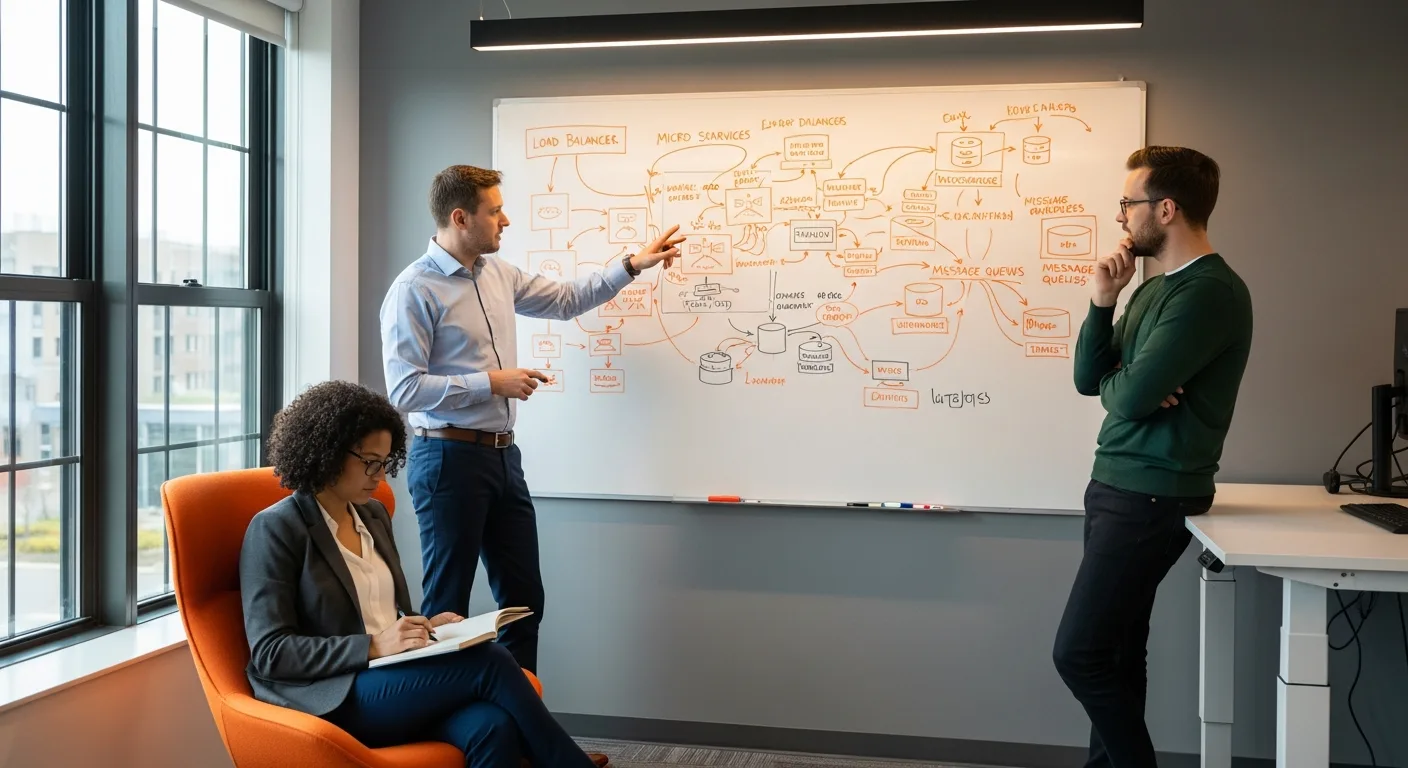

The second shift is that whiteboard-only rounds are mostly gone. Google returned to in-person interviews in 2026 to counter AI-assisted cheating, but even their format is collaborative now. You’re not drawing boxes while someone watches silently. You’re having a conversation with someone playing the role of a junior engineer or product manager who will push back on your assumptions. The interviewer wants to see how you respond when someone says “that won’t work at our scale” or “we tried that and it caused a thundering herd.” If your prep is memorizing component diagrams, you’re preparing for the wrong test.

The Bureau of Labor Statistics projects 15% software developer job growth through 2034, with approximately 129,200 annual openings. The competition for senior roles with system design fluency is real. KORE1’s average time-to-fill for senior backend and infrastructure roles sits at 17 days when the candidate pipeline is warm, but system design is the round where warm pipelines go cold. More offers die here than in any other interview stage for senior engineering hires.

The Scoring Framework Interviewers Actually Use

Most system design interviews score across four dimensions: requirements gathering, high-level architecture, component deep-dive, and trade-off analysis, with trade-off analysis weighted heaviest at the senior level.

Interviewers will sometimes tell candidates this at the start of the round, usually while framing it as “here’s what we’re looking for.” What they don’t tell you is how the weighting shifts by seniority.

| Scoring Dimension | Mid-Level Weight | Senior / Staff Weight | What Separates the Scores |
|---|---|---|---|
| Requirements gathering | 25% | 15% | Senior candidates are expected to ask the right clarifying questions without prompting. A mid-level candidate who asks good questions gets credit. A senior candidate who doesn’t loses points. |
| High-level architecture | 30% | 20% | Drawing boxes and arrows is baseline. Seniors need to justify why those boxes exist and what happens when one of them fails. |
| Component deep-dive | 25% | 25% | The interviewer picks one component and asks you to go three levels deeper. Database choice, caching strategy, message queue semantics. This is where preparation shows. |
| Trade-off analysis | 20% | 40% | Why SQL over NoSQL for this use case. Why eventual consistency is acceptable here but not there. What you’d sacrifice if the timeline got cut in half. This is the senior-level differentiator and where most candidates who “know the material” still lose. |

I had a candidate last year who drew a perfect architecture for a URL shortener in under ten minutes. Clean diagram. Named every component. The interviewer then asked: “What happens if your hash function produces a collision at 100 million URLs?” Silence. Not because the candidate didn’t know about hash collisions. Because he’d never been asked to reason about failure modes at scale in a live conversation. He’d studied the system. He hadn’t practiced defending it.

Distributed Systems Questions (The Core of Every Senior Loop)

These are the questions that appear in nearly every senior system design interview. Not because interviewers lack creativity. Because distributed systems problems expose whether a candidate has built real things or just read about them.

“Design a URL shortener like bit.ly.”

Sounds simple. It is not simple at senior scale. The junior version is a hash map with a redirect. At senior scale you’re talking about consistent hashing for distribution across multiple data stores, a collision resolution strategy that doesn’t degrade at billions of entries, an analytics ingestion path for click tracking that runs async so it doesn’t add latency to the redirect itself, and a cache invalidation strategy for expired or deleted links that actually propagates within your SLA window. The candidate I mentioned earlier failed here specifically because he treated the cache as a solved problem: “I’d put Redis in front.” The interviewer’s follow-up: “Your Redis cluster has 50GB of short URLs cached. A popular link gets deleted. How fast does that propagation need to happen and what’s your strategy?” That’s a senior question.
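To make the collision follow-up concrete, here is a minimal sketch of one possible resolution strategy: hash the URL, take a 7-character base62 code, and re-hash with a salt when the code is already taken. The function names, the 7-character length, and the in-memory `dict` standing in for the data store are all assumptions for illustration, not how bit.ly or any specific interviewer expects it to be done.

```python
import hashlib

ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ"


def base62(n: int, length: int = 7) -> str:
    """Encode the low-order base62 digits of n as a fixed-length string."""
    chars = []
    for _ in range(length):
        n, rem = divmod(n, 62)
        chars.append(ALPHABET[rem])
    return "".join(reversed(chars))


def shorten(url: str, store: dict[str, str], max_attempts: int = 5) -> str:
    """Return a short code for url, re-hashing with a salt on collision."""
    for attempt in range(max_attempts):
        digest = hashlib.sha256(f"{url}#{attempt}".encode()).digest()
        code = base62(int.from_bytes(digest[:8], "big"))
        # setdefault stands in for an atomic insert-if-absent in a real store
        existing = store.setdefault(code, url)
        if existing == url:
            return code  # fresh code, or this URL was shortened before
    raise RuntimeError("no free code found; consider a longer code length")
```

The number worth having ready: 7 base62 characters give about 3.5 trillion codes, and the birthday approximation n²/2N puts the expected number of colliding pairs at 100 million URLs on the order of a thousand. Collisions are not an edge case at that scale. They are routine, which is why the retry path has to be cheap and atomic.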

“Design a distributed message queue like Kafka.”

The question underneath the question: do you know the difference between at-most-once, at-least-once, and exactly-once delivery semantics, and can you explain it in a way that a product manager would follow? Specifically, why is exactly-once so hard that most production pipelines settle for at-least-once delivery with idempotent consumers as the practical compromise? Kafka does offer idempotent producers and transactions, but those guarantees cover reads and writes inside Kafka. The moment a consumer charges a card or writes to an external database, you are back to at-least-once plus idempotency. The candidate who says “Kafka already handles this” is not answering the question. The candidate who explains consumer group rebalancing, partition assignment, offset management, and what happens during a broker failure while a consumer is mid-batch processing is answering the question. I’ve seen strong candidates spend fifteen minutes on the partition strategy alone because the interviewer kept pushing. Good sign. It means the interviewer thinks you can go deeper.
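If the interviewer asks what “idempotent consumer” means in practice, a sketch like the one below is usually enough. It assumes every message carries a unique `message_id` and that the consumer can remember which IDs it has applied. The class and field names are hypothetical, and the in-memory set stands in for a durable store.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    message_id: str
    account: str
    amount: int


@dataclass
class IdempotentConsumer:
    balances: dict[str, int] = field(default_factory=dict)
    processed_ids: set[str] = field(default_factory=set)

    def handle(self, msg: Message) -> bool:
        """Apply msg at most once; return False when it is a redelivery."""
        if msg.message_id in self.processed_ids:
            return False  # duplicate from an at-least-once broker, ignore it
        # In a real system the side effect and the dedup record
        # would need to commit in the same transaction.
        self.balances[msg.account] = self.balances.get(msg.account, 0) + msg.amount
        self.processed_ids.add(msg.message_id)
        return True
```

The comment inside `handle` is the part to say out loud. If the balance update commits and the process dies before the ID is recorded, the redelivery gets applied twice, so the two writes have to be atomic.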

“Design a rate limiter for a high-traffic API.”

Token bucket or sliding window. Most candidates get there. The senior-level follow-ups are where it gets real. “Your rate limiter runs across 12 API gateway instances. How do you maintain a consistent count?” Distributed rate limiting requires either a centralized counter, which introduces a single point of failure and adds latency, or a local-plus-sync approach, which allows temporary over-admission. Which one you pick depends on whether you’re protecting a payment API where over-admission means financial loss or a social feed API where a brief burst above the limit is acceptable. The answer is never “use Redis.” The answer is “use Redis for the centralized counter approach, and here’s what I accept as a trade-off in terms of added p99 latency.”
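For reference, a single-instance token bucket is only a few lines. This is a minimal sketch of the local piece, with an injectable clock so it can be tested. The class name and parameters are assumptions for illustration. It deliberately does not solve the 12-instance problem, which is the part the interviewer wants you to reason about.

```python
import time
from typing import Callable


class TokenBucket:
    """Refills at `rate` tokens per second, holding at most `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Credit tokens earned since the last call, capped at capacity
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

Running one of these per gateway instance is the local-plus-sync starting point: each instance gets a share of the global limit and over-admits briefly if traffic is uneven. Moving the counter into a shared store removes the over-admission and adds a network hop to every request.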

Data and Storage Architecture Questions

Storage questions have gotten harder since 2024. Not because the fundamentals changed. Because interviewers realized that “I’d use PostgreSQL” isn’t an answer. It’s a starting point, and interviewers have learned to push past it immediately.

“Design the data model for a social network with 500 million users.”

A graph problem dressed as a relational problem, or vice versa, depending on what you optimize for. The news feed requires fan-out-on-write or fan-out-on-read, and both have well-documented trade-offs. Fan-out-on-write precomputes feeds, uses more storage, and handles celebrity accounts badly because a user with 10 million followers generates 10 million write operations per post. Fan-out-on-read is cheaper on writes but slower on reads and requires a caching layer sophisticated enough to assemble a feed from multiple sources in under 200ms. Twitter’s original architecture used fan-out-on-write and eventually moved to a hybrid model. That’s a data point worth mentioning in the interview because it demonstrates you’ve studied real implementations, not just theory.
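A hybrid can be sketched in a few lines: push posts into follower inboxes for ordinary accounts, and pull from the author’s own timeline at read time for accounts above a follower threshold. Everything here is an illustrative assumption, including the names, the in-memory dictionaries, and the tiny threshold. It is not a description of Twitter’s actual implementation.

```python
from collections import defaultdict

CELEBRITY_THRESHOLD = 3  # hypothetical; a real cutoff would be far higher


class FeedService:
    def __init__(self):
        self.followers = defaultdict(set)  # author -> users following them
        self.following = defaultdict(set)  # user -> authors they follow
        self.inbox = defaultdict(list)     # user -> precomputed feed entries
        self.outbox = defaultdict(list)    # author -> their own posts

    def follow(self, user, author):
        self.followers[author].add(user)
        self.following[user].add(author)

    def post(self, author, ts, text):
        entry = (ts, author, text)
        self.outbox[author].append(entry)
        if len(self.followers[author]) < CELEBRITY_THRESHOLD:
            for user in self.followers[author]:  # fan-out-on-write
                self.inbox[user].append(entry)

    def feed(self, user, limit=10):
        entries = set(self.inbox[user])
        for author in self.following[user]:
            if len(self.followers[author]) >= CELEBRITY_THRESHOLD:
                entries.update(self.outbox[author])  # fan-out-on-read
        return sorted(entries, reverse=True)[:limit]
```

The set in `feed` is there because an account can cross the threshold between posts, which would otherwise show the same entry twice. That kind of boundary case is what interviewers tend to probe once you say “hybrid.”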

“You have a table with 2 billion rows and queries are taking 30 seconds. Walk me through your diagnosis.”

Not a trick question. A diagnostic question. The answer is not “add an index.” The answer starts with: what does the query look like, what’s the execution plan, are there full table scans, is the working set larger than available memory, is there lock contention from concurrent writes, and is this a read-heavy or write-heavy workload. The solution might be indexing. Or partitioning. Or sharding. Or moving the analytical queries to a read replica. Or accepting that this query should be precomputed and served from a materialized view. The interviewer is testing your diagnostic process, not your knowledge of database tuning parameters.

One of our candidates answered this question by asking the interviewer three clarifying questions before proposing anything. First: “Is this an OLTP or OLAP workload?” Second: “What’s the write volume per second?” Third: “Is this on a managed service like RDS or self-hosted?” The hiring manager told me afterward that those three questions alone moved the candidate from “maybe” to “strong yes” because most candidates jump straight to solutions without understanding the constraints.

API and Service Architecture Questions

“Design a notification system that delivers push, email, and SMS notifications for an e-commerce platform.”

The deceptive simplicity of this question catches people. Three delivery channels. Multiple event types: order confirmation, shipping update, price drop alert, abandoned cart reminder. User preferences per channel per event type. Rate limiting so a user doesn’t get nine emails in ten minutes during a flash sale. Retry logic that handles SMS gateway failures differently from push notification failures because an undelivered push disappears while an undelivered SMS might arrive six hours later out of context.

Where senior candidates separate: the priority queue. Not all notifications are equal. An order confirmation must arrive within seconds. A price drop alert can wait. An abandoned cart email should not fire if the user completed the purchase between the time the event was generated and the time the email would be sent. That last one, the consistency check at send time, is the piece most candidates miss entirely. We had a candidate who caught it unprompted in a Meta loop. Got the offer.
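Here is a minimal sketch of both ideas together: a priority queue so order confirmations jump ahead, and a check at send time that drops an abandoned cart reminder if the cart has since been purchased. The priority values, class names, and the `purchased_carts` set (a stand-in for a lookup against the order service) are assumptions for illustration.

```python
import heapq
from dataclasses import dataclass, field
from typing import Optional

# Lower number = more urgent. These tiers are hypothetical.
PRIORITY = {"order_confirmation": 0, "shipping_update": 1,
            "price_drop": 2, "abandoned_cart": 2}


@dataclass(order=True)
class Notification:
    priority: int
    seq: int  # tie-breaker so equal priorities stay first-in, first-out
    kind: str = field(compare=False)
    user_id: str = field(compare=False)
    cart_id: Optional[str] = field(default=None, compare=False)


class Dispatcher:
    def __init__(self, purchased_carts: set):
        self.queue = []
        self.seq = 0
        self.purchased_carts = purchased_carts

    def enqueue(self, kind, user_id, cart_id=None):
        item = Notification(PRIORITY[kind], self.seq, kind, user_id, cart_id)
        heapq.heappush(self.queue, item)
        self.seq += 1

    def drain(self):
        sent = []
        while self.queue:
            n = heapq.heappop(self.queue)
            # Re-check state at send time, not at event time
            if n.kind == "abandoned_cart" and n.cart_id in self.purchased_carts:
                continue
            sent.append((n.kind, n.user_id))
        return sent
```

The design point is where the check lives. Filtering when the event is generated is not enough, because the purchase can land while the message is sitting in the queue.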

“Design an API gateway for a microservices architecture with 200 services.”

Two hundred services means two hundred potential failure points, two hundred different rate-limiting policies, authentication flows that might vary by service, and a routing layer that needs to handle schema versioning across all of them without downtime. The real question hiding inside this question is: how do you handle partial failures? Service A depends on Service B which depends on Service C. Service C is down. What does the user see? The circuit breaker pattern is the textbook answer. The senior answer includes: what the fallback behavior looks like for each degraded path, how you define the threshold for tripping the circuit breaker, and how you test this in staging when you can’t actually take down production services to validate your fallback logic.
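A bare-bones circuit breaker makes the threshold and fallback questions easier to discuss. This sketch trips after a number of consecutive failures, fails fast to a fallback while open, and lets one trial call through after a cooldown. The thresholds and names are assumptions, and a production version would also need thread safety and per-dependency metrics.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: do not touch the dependency
            # Cooldown elapsed: half-open, allow one trial call below
        try:
            result = fn()
        except Exception:
            self.failures += 1
            half_open_failed = self.opened_at is not None
            if half_open_failed or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip, or re-open after a failed trial
            return fallback()
        self.failures = 0
        self.opened_at = None
        return result
```

Every parameter in the constructor is a trade-off you should be able to defend. A low threshold trips on noise. A long cooldown keeps users on the degraded path after the dependency has recovered.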

AI Infrastructure Questions: The 2026 Addition

System design interviews at major tech companies in 2026 now routinely include AI infrastructure questions that test architecture judgment around ML serving, not ML theory.

Two years ago this section would have been a footnote, maybe a single paragraph about “emerging trends.” It exists as a full section now because every company with a product roadmap has an ML component, and the engineers building the infrastructure around those models need to understand latency budgets, model versioning, A/B testing at the inference layer, and graceful degradation when GPU capacity runs thin.

“Design the serving infrastructure for a large language model API.”

The question every candidate expects in 2026 and most still answer poorly. The failure mode is talking about the model itself. Interviewers don’t care whether you know how transformers work. They care whether you can design a system that handles 10,000 concurrent requests to a model that takes 2-8 seconds per inference, with variable output length, on GPU instances that cost $3/hour each.

What actually matters in this design: a request queue with priority tiers so paid users get lower latency than free-tier, token-level streaming so the user starts seeing output before the full generation completes, and an autoscaling strategy that accounts for the fact that GPU instances take 3 to 7 minutes to warm up, not the seconds a CPU-backed container typically needs. You also need a semantic caching layer for identical or near-identical prompts, which is harder than it sounds because “near-identical” requires its own similarity threshold. KV cache management across requests. Batching strategies that balance throughput against latency. What happens when you’re at capacity and a new request arrives: do you queue it, reject it, or route it to a smaller model?
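The queue, reject, or reroute question is easier to answer with a policy written down. The sketch below is one hypothetical policy, not a recommendation: paid requests queue until the queue is full and then fall back to a smaller model, while free-tier requests go to the smaller model first and are rejected only when the queue is also full. The tier names and the `Capacity` fields are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Capacity:
    in_flight: int      # requests currently on the large model
    max_in_flight: int  # what the GPU fleet can serve concurrently
    queued: int
    max_queue: int


def admit(tier: str, cap: Capacity) -> str:
    """Return one of 'serve', 'queue', 'downgrade', or 'reject'."""
    if cap.in_flight < cap.max_in_flight:
        return "serve"
    queue_has_room = cap.queued < cap.max_queue
    if tier == "paid":
        # Paid users wait for the large model while there is queue room
        return "queue" if queue_has_room else "downgrade"
    # Free tier gets the smaller model under load, and is shed last
    return "downgrade" if queue_has_room else "reject"
```

Whatever policy you pick, state the reasoning. Queueing protects quality and costs latency. Downgrading protects latency and costs quality. Rejecting protects the system and costs the user.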

“Design a real-time recommendation system with an ML pipeline.”

Feature stores. That’s the answer most candidates miss. The recommendation model needs features computed from user behavior in the last five minutes, the last hour, and the last 30 days. Those three time horizons have different computation costs and freshness requirements. Real-time features come from a streaming pipeline, probably Kafka plus Flink. Near-real-time features come from a batch job that runs every few minutes. Historical features come from a daily batch. The feature store unifies all three behind a single API so the model doesn’t need to know where its inputs came from.

The candidate who describes the ML model and skips the feature store has never built a recommendation system in production. The one who starts with the feature store and works outward probably has. Interviewers know this.

Scalability and Reliability Questions

“Design a CDN.”

Content delivery networks are a system design staple, but the senior version goes well beyond “put servers close to users.” Cache invalidation strategy. How do you handle a cache miss at an edge node: pull from origin, pull from a nearby peer node, or both? How do you handle a thundering herd when a popular piece of content expires simultaneously across all edge nodes? DNS-based routing versus anycast. TLS termination at the edge versus at the origin. The storage layer at each edge node: is it SSD-backed or memory-backed, and what’s the eviction policy when storage fills up?
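One common mitigation for simultaneous expiry is TTL jitter: each edge node randomizes its expiry a little so the refreshes spread out instead of landing on the origin in the same second. A minimal sketch, assuming a hypothetical `jittered_ttl` helper and a 10% spread:

```python
import random


def jittered_ttl(base_ttl: float, spread: float = 0.1, rng=random) -> float:
    """Return base_ttl scaled by a random factor in [1 - spread, 1 + spread]."""
    return base_ttl * (1 + rng.uniform(-spread, spread))


# Example: 200 edge nodes caching the same object with a one-hour base TTL
rng = random.Random(7)  # seeded only to make the example repeatable
expiries = [jittered_ttl(3600, 0.1, rng) for _ in range(200)]
```

With a 10% spread on a one-hour TTL, expirations land across a twelve-minute window rather than a single instant. Jitter does not help when one node gets many concurrent misses for the same key. That case needs request coalescing, where the first miss fetches from origin and the rest wait for its result.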

A fintech client of ours uses this question specifically because it tests geographic distribution thinking. They operate in 14 countries. They want to know if a candidate can reason about data sovereignty constraints, because caching user data at an edge node in a country with different privacy laws than the origin is a real compliance problem, not just a performance optimization.

“Your service handles 50,000 requests per second. Walk me through how you’d handle a 10x traffic spike during a product launch.”

The wrong answer is “autoscaling.” Not because autoscaling is wrong. Because by the time your auto-scaling group detects the spike, provisions new instances, passes health checks, and starts serving traffic, the damage is already done and your users have seen error pages for three to five minutes. Going from 50K to 500K RPS in minutes means you need capacity that’s either pre-provisioned or borrowable from somewhere. Load shedding: which requests do you drop first? Connection pooling limits. Database connection exhaustion, which is usually what actually breaks first. Queue depth limits to prevent cascading failures. The candidate who says “I’d set up an auto-scaling group with a target tracking policy on CPU utilization” is describing the steady-state solution, not the spike solution. The candidate who says “I’d pre-provision 3x headroom before the launch, implement load shedding at the API gateway layer with priority tiers, set database connection pool limits explicitly, and have a kill switch for non-critical features” is describing what actually works.
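Load shedding with priority tiers can be sketched as a function that keeps the most critical requests up to capacity and drops the rest. The tier numbers and request names below are hypothetical, and a real gateway would make this decision per request against a live concurrency limit rather than over a batch.

```python
def shed(requests, capacity):
    """Keep at most `capacity` requests, dropping the least critical first.

    requests: list of (priority, request_id); lower priority = more critical.
    Returns (admitted, dropped), each in original arrival order.
    """
    # Rank by priority, breaking ties by arrival order
    ranked = sorted(enumerate(requests), key=lambda item: (item[1][0], item[0]))
    keep = {index for index, _ in ranked[:capacity]}
    admitted = [r for i, r in enumerate(requests) if i in keep]
    dropped = [r for i, r in enumerate(requests) if i not in keep]
    return admitted, dropped


reqs = [(2, "recs-1"), (0, "checkout-1"), (1, "search-1"),
        (0, "checkout-2"), (2, "recs-2")]
admitted, dropped = shed(reqs, capacity=3)
```

The arithmetic is worth saying out loud too. With 3x headroom on a 50K RPS baseline you can serve 150K, so a true 10x spike to 500K still leaves 350K RPS that has to be shed, queued, or served from cache. Pre-provisioning and shedding are complements, not alternatives.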

How to Structure Your Answer: The 5-Minute Framework

There are several published frameworks. RESHADED. DDIA-based approaches. Company-specific rubrics. Most of them overcomplicate it.

Spend the first five minutes on requirements. Not two minutes. Five. Ask about scale. How many users. How many requests per second. What’s the read-to-write ratio, because a system that’s 95% reads and 5% writes has a fundamentally different architecture than one that’s 50/50, and most candidates never ask this question even though it changes almost every downstream decision. Ask about consistency requirements: does the user need to see their own write immediately, or is eventual consistency acceptable? Ask about latency targets: is this a 50ms API or a “complete within 30 seconds” batch job? Ask about the failure budget: is 99.9% uptime sufficient, or does this need five nines?
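The failure budget question lands better when you can translate the percentages into time. A quick sketch of the arithmetic, using a hypothetical helper name and a 365-day year:

```python
def downtime_minutes_per_year(availability_percent: float) -> float:
    """Allowed downtime per 365-day year at a given availability target."""
    minutes_per_year = 365 * 24 * 60  # 525,600
    return minutes_per_year * (1 - availability_percent / 100)


three_nines = downtime_minutes_per_year(99.9)   # about 525.6 minutes
five_nines = downtime_minutes_per_year(99.999)  # about 5.3 minutes
```

That is roughly 8.8 hours a year at 99.9% versus a little over five minutes at five nines. The second number rules out any recovery plan that involves a human being paged, which is why the answer to this question changes the architecture.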

Then draw the high-level architecture. Ten minutes. Don’t start with the database. Start with the user request and trace it through the system. Load balancer. API gateway. Service layer. Data layer. Cache layer. Async processing if applicable.

Then go deep on one component. The interviewer will usually pick it for you. “Tell me more about your caching strategy.” Or “walk me through the database schema.” Fifteen minutes. This is where your real experience shows.

Then wrap with trade-offs. What would you change if the budget were half? What would you change if the latency target were 10x stricter? What’s the weakest point in this design and what’s your monitoring strategy for catching it before users notice?

Mistakes That Cost Offers

Sourced from debrief calls across 40+ engineering searches in the past year. These are not hypothetical. Each one describes an actual candidate who lost an actual offer.

Starting with the database schema before understanding requirements. A candidate for a solutions architect role paying $195K opened with “I’d use PostgreSQL with this table structure” before asking a single question about the system’s constraints. The interviewer flagged it as “solution-first thinking” and it colored the rest of the evaluation. Requirements first. Always.

Naming technologies without explaining why. “I’d use Kafka for the message queue, Redis for caching, and Elasticsearch for search.” That’s a shopping list. The interviewer wants to hear: “I’d use Kafka here because we need guaranteed ordering within a partition and the ability to replay events for debugging, and the consumer group model maps cleanly to our service topology where each downstream service processes events independently.” Same technology. Different signal.

Ignoring failure modes. Every system fails. The candidate who designs only for the happy path is telling the interviewer they’ve never been on-call. “What happens when your primary database goes down?” is coming. “What happens when the network between your services has a 5% packet loss rate?” is coming. “What happens when your cache layer evicts the hottest key right before a traffic spike?” is coming. Have answers. Better yet, bring them up before the interviewer asks.

Treating the interviewer as an examiner instead of a collaborator. The best system design interviews feel like a pairing session. The interviewer drops hints. “What about consistency here?” is not a gotcha. It’s a nudge toward a trade-off they want to discuss. Candidates who respond defensively, who treat every question as a challenge rather than a collaboration signal, score lower even when their technical content is correct. We see this more with candidates coming from competitive coding backgrounds. System design is not a competition. It’s a conversation.

What Hiring Managers Actually Want From This Round

I asked seven hiring managers at companies we’ve placed with in the last six months what they’re really scoring for in the system design round. Not the official rubric. The actual thing they’re filtering on.

Five of seven said the same thing in different words: “I want to know if I can put this person in a room with a product manager and a junior engineer and they’ll drive the technical direction without creating a mess.” Not “do they know CAP theorem.” Do they know when to invoke it and when it’s irrelevant. Not “can they design a scalable system.” Can they explain why they made the choices they made to someone who doesn’t have their context.

One hiring manager at a healthcare SaaS company said: “The candidate who tells me they’d use eventual consistency for patient medication records is telling me they’ve never worked in healthcare.” Domain awareness matters. If you’re interviewing at a fintech company, your system design answer for a payment processing system should reflect that financial transactions are not the same as social media posts. Idempotency isn’t optional. Audit trails aren’t optional. The engineering manager interview at these companies will test for the same domain sensitivity.

KORE1 screens for this before submitting candidates through our software engineering staffing practice. It’s the difference between a 92% retention rate at twelve months and a candidate who passes the technical screen but flames out once they’re building real systems with real constraints and real users filing support tickets at 2 AM.

Questions Worth Practicing Even If They Don’t Get Asked

Four questions that build transferable reasoning even if your specific interview goes in a different direction.

“Design Google Docs.” Real-time collaboration. Operational transformation or CRDTs for conflict resolution. WebSocket connections at scale. The versioning and permissions model. This question exercises every major system design muscle at once.

“Design a payment processing system.” Idempotency. Distributed transactions. The saga pattern for multi-step workflows that might fail at any step. PCI compliance constraints that limit where cardholder data can be stored and how it traverses the network. Reconciliation processes that catch discrepancies between what the payment gateway says and what your database says.

“Design a search engine for an e-commerce platform.” Inverted index construction. Ranking algorithms that balance relevance with business objectives like margin and inventory levels. Typo tolerance. Faceted filtering. The personalization layer. How you handle a product catalog that changes 50,000 times per day without rebuilding the entire index.

“Design a monitoring and alerting system.” Metric ingestion at scale. Time-series storage. Alert routing. On-call rotation. The deduplication problem: how do you avoid paging someone 47 times for the same incident? Runbook integration. This question is underrepresented in prep materials but shows up frequently at companies running their own infrastructure.
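For the deduplication problem in the monitoring question, the simplest version is a suppression window keyed on an alert fingerprint. This sketch assumes the fingerprint is something like service plus alert name, and that five minutes is an acceptable window. Both are illustrative choices, and the class name is hypothetical.

```python
class AlertDeduplicator:
    """Page once per fingerprint per suppression window."""

    def __init__(self, window_seconds: float = 300.0):
        self.window = window_seconds
        self.last_paged = {}  # fingerprint -> time of the last page sent

    def should_page(self, fingerprint: str, now: float) -> bool:
        last = self.last_paged.get(fingerprint)
        if last is not None and now - last < self.window:
            return False  # same incident, already paged recently
        self.last_paged[fingerprint] = now
        return True


dedup = AlertDeduplicator(window_seconds=300.0)
# 47 firings of the same alert, one every five seconds
pages = sum(dedup.should_page("checkout:high_error_rate", now=i * 5.0)
            for i in range(47))
```

Here the 47 firings produce one page. The follow-up to expect: what happens when the incident is still ongoing after the window closes, and whether a re-page at that point is a feature or a bug.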

Things People Ask About System Design Interviews

How long should I spend preparing for the system design round?

Three to four weeks of focused practice if you have production experience, longer if you’ve primarily worked on single-service applications. But the prep that matters isn’t reading. It’s practicing out loud. Explaining your design to another person, getting interrupted, adjusting on the fly. Reading “Designing Data-Intensive Applications” is valuable background. Talking through a design for 45 minutes while someone challenges your assumptions is the prep that actually transfers to the interview room. An hour of mock interviewing is worth about three hours of solo study.

Do I need to memorize specific architectures for common questions?

No, and attempting it usually backfires. Interviewers detect rehearsed answers within the first two minutes. What you need is a mental model for each major system category: real-time communication systems, content delivery systems, data processing pipelines, transactional systems. If you understand the fundamental constraints and trade-offs for each category, you can derive a reasonable architecture for any specific question within it. The candidate who clearly memorized the “design Twitter” answer from a prep site but can’t adapt when the interviewer changes a constraint is in worse shape than the candidate who builds from principles.

What’s the difference between system design interviews at FAANG versus mid-size companies?

Scale of the expected answer, mostly. A system design question at Google or Meta implicitly assumes hundreds of millions of users, global distribution, and five-nines availability. The same question at a 500-person SaaS company might assume 100,000 users, a single cloud region, and 99.9% uptime. Both are valid. The reasoning process is identical. The specific decisions change. At FAANG, you’re expected to know the trade-offs between consistent hashing and range-based partitioning. At a mid-size company, you might get credit for knowing that partitioning is even necessary at their scale and being honest about where the complexity boundary sits.

Should I bring up cost considerations in my system design answer?

Yes. One of our placed candidates found $14,000 per month in unnecessary AWS spend in their first week: the company had been running oversized instances for a recommendation service that processed 200 requests per minute. Cost awareness signals production experience. Mention that your autoscaling policy needs a cost ceiling so a traffic spike doesn’t generate a $40K bill overnight. Mention that your caching strategy reduces database query volume by 80%, which directly affects the Aurora or RDS bill. Mention that you picked DynamoDB over Aurora for a specific workload partly because, at that query volume, the on-demand pricing model was 60% cheaper than provisioned instances running at 15% utilization most of the day. The interviewer isn’t testing whether you can optimize a bill. They’re testing whether you think about systems as things that cost money to run, not just things that process requests.

How do AI-generated answers change how system design interviews are evaluated?

Significantly, and not in the direction most candidates expect. Google returned to in-person interviews partly because of AI-assisted cheating. But the bigger shift is that interviewers now probe harder for production experience because AI can generate plausible-sounding architectures. The follow-up questions have gotten sharper: “You said you’d use a write-ahead log. Have you implemented one? What went wrong?” or “You mentioned eventual consistency. Tell me about a time eventual consistency caused a user-visible bug in a system you owned.” If your system design answer sounds like it came from a well-organized blog post, expect the interviewer to push until they find the boundary between what you’ve read and what you’ve built. The boundary is the interesting part.

Ready to prep for your next system design interview or need help finding senior engineering roles where your architecture skills are the differentiator? Talk to our engineering team about what’s open in your stack and market.